Contributing author: Tim Gardner

Editor’s note: This post originally appeared on PLOS Tech; it is republished here with permission.

From Gutenberg’s invention of the printing press to the Internet of today, technology has enabled faster communication, and faster communication has accelerated technology development. Today, we can zip photos from a mountaintop in Switzerland back home to San Francisco with hardly a thought, but that wasn’t so trivial just a decade ago. It’s not just selfies that are being sent; it’s also product designs, manufacturing instructions, and research plans — all of it enabled by invisible technical standards (e.g., TCP/IP) and language standards (e.g., English) that allow machines and people to communicate.

But in the laboratory sciences (life, chemical, material, and other disciplines), communication remains inhibited by practices more akin to the oral traditions of a blacksmith shop than the modern Internet. In a typical academic lab, the reference description of an experiment is the long-form narrative in the “Materials and Methods” section of a paper or a book. Similarly, industry researchers depend on basic text documents in the form of Standard Operating Procedures. In both cases, essential details of the materials and protocol for an experiment are typically written somewhere in a long-forgotten, hard-to-interpret lab notebook (paper or electronic). More often still, details are simply left to the experimenter to remember and to the “lab culture” to retain.

At the dawn of science, when a handful of researchers were working on fundamental questions, this may have been good enough. But nowadays this archaic method of protocol record keeping and sharing is so lacking that half of all biomedical studies are estimated to be irreproducible, wasting $28 billion of U.S. government funding each year. With more than $400 billion invested each year in biological and chemical research globally, the full cost of irreproducible research to the public and private sectors worldwide could be staggeringly large.

One of the main sources of this problem is that there is no shared method for communicating unambiguous protocols, no standard vocabulary, and no common design. This makes it harder to share, improve upon, and reuse experimental designs. Imagine if a construction company had no blueprints to rely on, only ad hoc written documents describing its project. That’s more or less where science is today. The lack of a shared method also makes it hard, if not impossible, to compare and extend experiments run by different people and at different times, and it leads to unidentified data errors and missed observations of fundamental import.

To address this gap, we set out at Riffyn to give lab researchers the software design tools to communicate their work as effortlessly as sharing a Google doc, and with the precision of computer-aided design. [Disclosure: O’Reilly AlphaTech Ventures is an investor in Riffyn.] But precise designs are only useful for communication if the underlying vocabulary is broadly understood. It occurred to us that development of such a common vocabulary is an ideal open source project.

To that end, Riffyn teamed up with PLOS to create ResourceMiner. ResourceMiner is an open source project that uses natural-language processing tools and a crowdsourced stream of updates to create controlled vocabularies that adapt to researchers’ experiments and continually incorporate new materials and equipment as they come into use.

A number of outstanding projects have produced, or are producing, standardized vocabularies (BioPortal, Allotrope, Global Standards Institute, Research Resource Initiative). However, the standards are constantly battling to stay current with the shifting landscape of practical use patterns. Even a standard that is extensible by design needs to be manually extended. ResourceMiner aims to build on the foundations of these projects and extend them by mining the incidental annotation of terminology that occurs in the scientific literature — a sort of indirect crowdsourcing of knowledge.

We completed the first stage of ResourceMiner during the second annual Mozilla Science Global Sprint in early June. The goal of the Global Sprint is to develop tools and lessons to advance open science and scientific communication, and this year’s was a rousing success (summary here). More than 30 sites around the world participated, including one at Riffyn for our project.

The base vocabulary for ResourceMiner is a collection of ontologies sourced from the National Center for Biomedical Ontology (BioPortal) and Wikipedia. We will soon incorporate ontologies from the Global Standards Initiative and the Research Resource Initiative as well. Our first project within ResourceMiner was to annotate this base vocabulary with usage patterns from subject-area tags available in PLOS publications. Usage patterns will enable more effective term search (akin to PageRank) within software applications.
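To make the idea of usage-aware term search concrete, here is a minimal sketch in Python. It is not ResourceMiner's or Riffyn's implementation, and it is a plain count-based ranking rather than PageRank. The function name `rank_terms`, the shape of the `usage_counts` dictionary, and all the numbers in it are assumptions invented for illustration.

```python
def rank_terms(query, usage_counts, subject_area):
    """Rank vocabulary terms containing `query`, most used in `subject_area` first.

    `usage_counts` maps term -> {subject area -> number of papers using the term}.
    This layout is a hypothetical one chosen for the example.
    """
    query = query.lower()
    # Keep only terms whose text contains the query (case-insensitive).
    candidates = [term for term in usage_counts if query in term.lower()]
    # Sort by descending usage in the requested subject area, then alphabetically.
    return sorted(
        candidates,
        key=lambda term: (-usage_counts[term].get(subject_area, 0), term.lower()),
    )


# Illustrative, made-up counts; not drawn from the PLOS corpus.
usage_counts = {
    "polymerase chain reaction": {"Molecular biology": 950, "Ecology": 40},
    "polymerase": {"Molecular biology": 300, "Ecology": 5},
    "RNA polymerase II": {"Molecular biology": 120},
    "centrifuge": {"Molecular biology": 500, "Ecology": 60},
}

ranked = rank_terms("polymerase", usage_counts, "Molecular biology")
print(ranked)
```

The point of the sketch is only that the same query can be ordered differently depending on the subject area the user is working in, which is what the subject-area annotation makes possible.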

Out of the full corpus of PLOS publications, about 120,000 papers included a specific methods section. The papers were loaded into a MongoDB instance and indexed on the Methods and Materials section for full-text searches. About 12,000 of the 60,000 terms in the base vocabulary were matched to papers based on text string matches. The parsed papers and term counts can be accessed on our MongoDB server, and instructions on how to do that are in the project GitHub repo. We are incorporating the subject-area tags into Riffyn’s software to adapt search results to the user’s experiment and to nudge researchers into using the same vocabulary terms for the same real-world items. Riffyn’s software also gives users the ability to derive new, more precise terms as needed and then contribute those directly to the ResourceMiner database.
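The matching step can be sketched in a few lines of Python. This is an in-memory illustration only, assuming case-insensitive whole-word matching; the project itself ran its searches against a MongoDB full-text index, and its exact matching rules are not described here. The function name `count_term_matches` and the sample sentences and terms are hypothetical.

```python
import re
from collections import Counter


def count_term_matches(terms, methods_texts):
    """Count, for each term, how many methods sections mention it at least once."""
    counts = Counter()
    # One case-insensitive pattern per term; the lookarounds stop "PCR"
    # from matching inside a longer word (an assumption of this sketch).
    patterns = {
        term: re.compile(r"(?<!\w)" + re.escape(term) + r"(?!\w)", re.IGNORECASE)
        for term in terms
    }
    for text in methods_texts:
        for term, pattern in patterns.items():
            if pattern.search(text):
                counts[term] += 1
    return counts


# Made-up methods snippets and vocabulary terms, for illustration only.
papers = [
    "Cells were lysed and DNA was amplified by PCR using Taq polymerase.",
    "Samples were analyzed on a mass spectrometer after PCR cleanup.",
    "Mice were housed under standard conditions.",
]
vocabulary = ["PCR", "Taq polymerase", "mass spectrometer", "flow cytometer"]

counts = count_term_matches(vocabulary, papers)
for term, n in counts.most_common():
    print(term, n)
```

Terms that never appear, such as the hypothetical "flow cytometer" entry here, simply get no count, which mirrors the fact that only a fraction of the base vocabulary was matched to papers.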

The next steps in the development of ResourceMiner (and where you can help!) are to (1) expand the controlled vocabulary of resources by text mining other collections of protocols, (2) apply subject tags to papers and protocols from other repositories based on known terms, and (3) add term counts from these expanded papers and protocols to the database. During the sprint, we identified two machine learning tools that could be useful in these efforts and are worth exploring further: the Named Entity Recognizer within Stanford NLP for new resource identification, and Maui for topic identification. Our existing vocabulary and subject-area database provide a set of training data.

Special thanks to Rachel Drysdale, Jennifer Lin, and John Chodaki at PLOS for their expertise, suggestions, and data resources; to Bill Mills and Kaitlin Thaney at Mozilla Science for their enthusiasm and for facilitating the kick-off event for ResourceMiner; and to the contributors to ResourceMiner.

If you’d like to contribute to this project or have ideas for other applications of ResourceMiner, please get in touch! Check out our project GitHub repo, in particular the wiki and issues, or contact me at mcarr@riffyn.com.

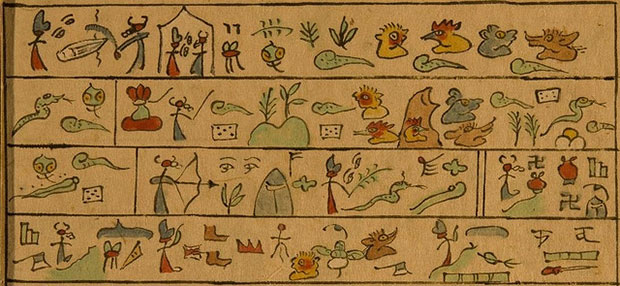

Image on article and category pages via Paul K on Flickr, used under a Creative Commons license.