"Big Data Sensor Networks and Distributed Computation" entries

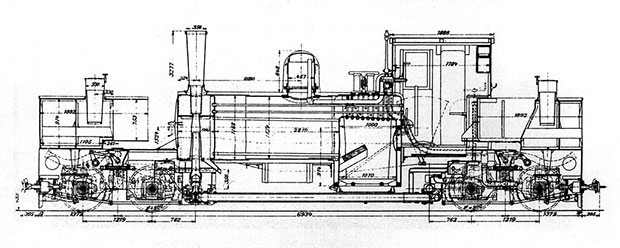

How trains are becoming data driven

Railways are at the intersection of Internet and industry.

Trains and public transport are, for many of us, a vital part of our daily lives. Large cities are particularly dependent on an efficient public transport system, and if disruption occurs, it usually affects many passengers while spreading across the transport network. But our requirements as passengers are growing and maturing. Safety is paramount, but we also care about timeliness, comfort, Internet access, and other amenities. With strong competition for regional and long-distance trains, providing an attractive service has become critical for many rail operators today.

The railway industry is an old industry. For the last 150 years, it has been built around mechanical systems maintained throughout a lifetime of 30 years, mostly through reactive or preventive maintenance. But this is no longer enough to deliver the type of service we all want and expect to experience.

Deriving insight from the data of trains

Over the last few years, the rail industry has been transforming itself, embracing IT, digitalization, big data, and the related changes in business models. This change is driven by railway operating companies that demand higher vehicle and infrastructure availability and, increasingly, want to transfer their operational risk to suppliers. In parallel, the thought leaders among maintenance providers have embraced the technology opportunities to radically improve their offerings and help their customers deliver better value. Read more…

Announcing Cassandra certification

A new partnership between O’Reilly and DataStax offers certification and training in Cassandra.

I am pleased to announce a joint program between O’Reilly and DataStax to certify Cassandra developers. This program complements our developer certification for Apache Spark and — just as in the case of Databricks and Spark — we are excited to be working with the leading commercial company behind Cassandra. DataStax has done a tremendous job growing and nurturing the Cassandra community, user base, and technology.

Once the certification program is ready, developers can take the exam online, in designated test centers, and at select training courses. O’Reilly will also be developing books, training days, and videos targeted at developers and companies interested in the Cassandra distributed storage system.

Cassandra is a popular component used for building big data and real-time analytic platforms. Its ability to comfortably scale to clusters with thousands of nodes makes it a popular option for solutions that need to ingest and make sense of large amounts of time series and event data. As noted in an earlier post, real-time event data are at the heart of one of the trends we’re closely following: the convergence of cheap sensors, fast networks, and distributed computation. Read more…

Now available: Big Data Now, 2014 edition

Our wrap-up of important developments in the big data field.

- Cognitive augmentation: As data processing and data analytics become more accessible, jobs that can be automated will go away. But to be clear, there are still many tasks where the combination of humans and machines produces superior results.

- Intelligence matters: Artificial intelligence is now playing a bigger and bigger role in everyone’s lives, from sorting our email to rerouting our morning commutes, from detecting fraud in financial markets to predicting dangerous chemical spills. The computing power and algorithmic building blocks to put AI to work have never been more accessible.

The Internet of Things has four big data problems

The IoT and big data are two sides of the same coin; building one without considering the other is a recipe for doom.

The Internet of Things (IoT) has a data problem. Well, four data problems. Walking the halls of CES in Las Vegas last week, it’s abundantly clear that the IoT is hot. Everyone is claiming to be the world’s smartest something. But that sprawl of devices, lacking context, with fragmented user groups, is a huge challenge for the burgeoning industry.

What the IoT needs is data. Big data and the IoT are two sides of the same coin. The IoT collects data from myriad sensors; that data is classified, organized, and used to make automated decisions; and the IoT, in turn, acts on it. It’s precisely this ever-accelerating feedback loop that makes the coin as a whole so compelling.

Nowhere are the IoT’s data problems more obvious than with that darling of the connected tomorrow known as the wearable. Read more…

The promise and problems of big data

A look at the social and moral implications of living in a deeply connected, analyzed, and informed world.

Editor’s note: this is an excerpt from our new report Data: Emerging Trends and Technologies, by Alistair Croll. You can download the free report here.

We’ll now look at both the light and the shadows of this new dawn, the social and moral implications of living in a deeply connected, analyzed, and informed world. This is both the promise and the peril of big data in an age of widespread sensors, fast networks, and distributed computing.

Solving the big problems

The planet’s systems are under strain from a burgeoning population. Scientists warn of rising tides, droughts, ocean acidity, and accelerating extinction. Medication-resistant diseases, outbreaks fueled by globalization, and myriad other semi-apocalyptic Horsemen ride across the horizon. Can data fix these problems? Can we extend agriculture with data? Find new cures? Track the spread of disease? Understand weather and marine patterns? General Electric’s Bill Ruh says that while the company will continue to innovate in materials sciences, the place where it will see real gains is in analytics.

It’s often been said that there’s nothing new about big data. The “iron triangle” of Volume, Velocity, and Variety that Doug Laney coined in 2001 has been a constraint on all data since the first database. Basically, you could have any two you want fairly affordably. Consider:

- A coin-sorting machine sorts a large volume of coins rapidly, but assumes a small variety of coins. It wouldn’t work well if there were hundreds of coin types.

- A public library, organized by the Dewey Decimal System, has a wide variety of books and topics, and a large volume of those books — but stacking and retrieving the books happens at a slow velocity.

What’s new about big data is that getting all three Vs has become so cheap it’s almost not worth billing for. A Google search happens with great alacrity, combs the sum of online knowledge, and retrieves a huge variety of content types. Read more…

Cheap sensors, fast networks, and distributed computing

The history of computing has been a constant pendulum — that pendulum is now swinging back toward distribution.

Editor’s note: this is an excerpt from our new report Data: Emerging Trends and Technologies, by Alistair Croll. You can download the free report here.

The trifecta of cheap sensors, fast networks, and distributed computing is changing how we work with data. But making sense of all that data takes help, which is arriving in the form of machine learning. Here’s one view of how that might play out.

Clouds, edges, fog, and the pendulum of distributed computing

The history of computing has been a constant pendulum, swinging between centralization and distribution. The first computers filled rooms, and operators were physically within them, switching toggles and turning wheels. Then came mainframes, which were centralized, with dumb terminals.

As the cost of computing dropped and the applications became more democratized, user interfaces mattered more. The smarter clients at the edge became the first personal computers; many broke free of the network entirely. The client got the glory; the server merely handled queries.

Once the web arrived, we centralized again. LAMP (Linux, Apache, MySQL, PHP) was buried deep inside data centers, with the computer at the other end of the connection relegated to little more than a smart terminal rendering HTML. Load balancers sprayed traffic across thousands of cheap machines. Eventually, the web turned from static sites to complex software-as-a-service (SaaS) applications.

Then the pendulum swung back to the edge, and the clients got smart again. First with AJAX, Java, and Flash; then in the form of mobile apps, where the smartphone or tablet did most of the hard work and the back end was a communications channel for reporting the results of local action. Read more…