- Ubiquity — Sears Holdings has formed a new unit to market space from former Sears and Kmart retail stores as a home for data centers, disaster recovery space and wireless towers.

- Google Abandons Open Standards for Instant Messaging (EFF) — it has to be a sign of the value to users of open standards that small companies embrace them and large companies reject them.

- How Does Copyright Work in Space? (The Economist) — amazingly complex rights trail for the International Space Station-recorded cover of “Space Oddity”. Sample: Commander Hadfield and his son Evan spent several months hammering out details with Mr Bowie’s representatives, and with NASA, Russia’s space agency ROSCOSMOS and the CSA. That’s the SIMPLE HAPPY ENDING.

- Great Lessons: Evan Weinberg’s “Do You Know Blue?” (Dan Meyer) — It’s a bridge from math to computer science. Students get a chance to write algorithms in a language understood by both mathematicians and the computer scientists. It’s analogous to the Netflix Prize for grown-up computer scientists.

"standards" entries

MathML forges on

The standard for mathematical content in publishing work flows, technical writing, and math software

20 years into the web, math and science are still second class citizens on the web. While MathML is part of HTML 5, its adoption has seen ups and downs but if you look closely you can see there is more light than shadow and a great opportunity to revolutionize educational, scientific and technical communication.

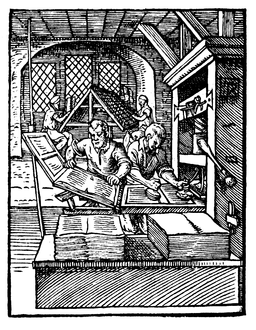

Somebody once compared the first 20 years of the web to the first 100 years of the printing press. It has become my favorite perspective when thinking about web standards, the web platform and in particular browser development. 100 years after Gutenberg the novel had yet to be invented, typesetting quality was crude at best and the main products were illegally copied pamphlets. Still, the printing press had revolutionized communication and enabled social change on a massive scale.

In the near future, all our current web technology will look like Gutenberg’s original press sitting next to an offset digital printing machine.

With faster and faster release cycles it is sometimes hard to keep in mind what is important in the long run—enabling and revolutionizing human communication.

Since I joined the MathJax team in 2012, I have gained many new perspectives on MathML, the web standard for display of mathematical content, and its role in making scientific content a first class citizen on the web. But it is rather useless to talk about MathML’s potential without knowing about the state of MathML on the web. So let’s tackle that in this post.

Security After the Death of Trust

Not just paying attention, but starting over

[contextly_sidebar id=”214edfe1c80f880bd3aa0ce4d78cf1d0″]

Security has to reboot. What has passed for strong security until now is going to be considered only casual security going forward. As I put it last week, the damage that has become visible over the past few months means that “we need to start planning for a computing world with minimal trust.”

So what are our options? I’m not sure if this ordering goes precisely from worst to best, but today this order seems sensible.

Stay the Course

This situation may not be that bad, right?

After the NSA Subverted Security Standards

Is protecting open processes possible?

I was somewhat surprised, despite my paranoia, by the extent of NSA data collection. I was very surprised, though, to find the New York Times reporting that NSA seems to have eased its data collection challenge by weakening security standards generally:

Simultaneously, the N.S.A. has been deliberately weakening the international encryption standards adopted by developers. One goal in the agency’s 2013 budget request was to “influence policies, standards and specifications for commercial public key technologies,” the most common encryption method.

Cryptographers have long suspected that the agency planted vulnerabilities in a standard adopted in 2006 by the National Institute of Standards and Technology and later by the International Organization for Standardization, which has 163 countries as members.

Classified N.S.A. memos appear to confirm that the fatal weakness, discovered by two Microsoft cryptographers in 2007, was engineered by the agency. The N.S.A. wrote the standard and aggressively pushed it on the international group, privately calling the effort “a challenge in finesse.”

The Guardian tells a similar story. It’s not just commercial software, where the path seemed direct, but open standards and software where it seems like it should have been harder.

I was very happy to wake up to a piece from the IETF emphasizing their commitment to strengthening security. There’s one problem, though, in its claim that:

IETF participants want to build secure and deployable systems for all Internet users

Last week’s revelations make it sadly clear that not all IETF participants are excited about creating genuinely secure systems.

Toward Responsive Web Programming

Creating flexible expectations

“Expect the unexpected” has long been a maxim of web development. New browsers and devices arrive, technologies change, and things break. The lore of web development isn’t just the technology: it addresses the many challenges of dealing with customers who want to lock everything down.

Matt Griffin (and a lot of others) reminded me of these difficulties at Artifact, and his Client Relationships and the Multi-Device Web brings it home for designers.

Is there room for programmers to tell a similar story?

I don’t mean agile. Agile development is difficult enough to explain to clients, but applications that adapt to their circumstances are a separate set of complications. Iterating on adaptable behaviors may be more difficult than iterating on adaptable designs, but it opens new possibilities both for applications and for the evolution of the Web.

Responsive Web Design is (slowly) becoming the new baseline, giving designers a set of tools for building pages that (usually) provide the same functionality while adapting to different circumstances. Programmers sometimes provide different functionality to different users, but it’s more often about cases where users have different privileges than about different devices and contexts.

Adjusting how content displays is complex enough, but modifying application behavior to respond to different circumstances is more unusual. The goal of most web development has been to provide a single experience across a variety of devices, filling in gaps whenever possible to support uniformity. The history of “this page best viewed on my preferred browser” is mostly ugly. Polyfills, which I think have a bright future, emerged to create uniformity where browsers didn’t.

Browsers, though, now provide a huge shared context. Variations exist, of course, and cause headaches, but many HTML5 APIs and CSS3 features can work nicely as supplements to a broader site. Yes, you could build a web app around WebRTC and Media Capture and Streams, and it would only run on Firefox and Chrome right now. But you could also use WebRTC to help users talk about content that’s visible across browsers, and only the users on Firefox and Chrome would have the extra video option. The Web Audio API is also a good candidate for this, as might be some graphics features.

This is harder, of course, with things like WebSockets that provide basic functionality. For those cases, polyfills seem like a better option. Something that seems as complicated and foundational as IndexedDB could be made optional, though, by switching whether data is stored locally or remotely (or both).

HTML5 and CSS3 have re-awakened Web development. I’m hoping that we can develop new practices that let us take advantage of these tools without having to wait for them to work everywhere. In the long run, I hope that will create a more active testing and development process to give browser vendors feedback earlier—but getting there will require changing the expectations of our users and customers as well.

Patients matter most, but technology matters a lot

Report from the Health Data Forum

Computing practices that used to be religated to experimental outposts are now taking up residence at the center of the health care field. From natural language processing to machine learning to predictive modeling, you see people promising at the health data forum (Health Datapalooza IV) to do it in production environments.

Four short links: 24 May 2013

Repurposing Dead Retail Space, Open Standards, Space Copyright, and Bridging Lessons

Designing resilient communities

Establishing an effective organization for large-scale growth

In the open source and free software movement, we always exalt community, and say the people coding and supporting the software are more valuable than the software itself. Few communities have planned and philosophized as much about community-building as ZeroMQ. In the following posting, Pieter Hintjens quotes from his book ZeroMQ, talking about how he designed the community that works on this messaging library.

How to Make Really Large Architectures (excerpted from ZeroMQ by Pieter Hintjens)

There are, it has been said (at least by people reading this sentence out loud), two ways to make really large-scale software. Option One is to throw massive amounts of money and problems at empires of smart people, and hope that what emerges is not yet another career killer. If you’re very lucky and are building on lots of experience, have kept your teams solid, and are not aiming for technical brilliance, and are furthermore incredibly lucky, it works.

But gambling with hundreds of millions of others’ money isn’t for everyone. For the rest of us who want to build large-scale software, there’s Option Two, which is open source, and more specifically, free software. If you’re asking how the choice of software license is relevant to the scale of the software you build, that’s the right question.

The brilliant and visionary Eben Moglen once said, roughly, that a free software license is the contract on which a community builds. When I heard this, about ten years ago, the idea came to me—Can we deliberately grow free software communities?

Four short links: 22 March 2013

HTML DRM, South Korean Cyberwar, Display Advertising BotNet, and Red Scares

- Defend the Open Web: Keep DRM Out of W3C Standards (EFF) — W3C is there to create comprehensible, publicly-implementable standards that will guarantee interoperability, not to facilitate an explosion of new mutually-incompatible software and of sites and services that can only be accessed by particular devices or applications. See also Ian Hickson on the subject. (via BoingBoing)

- Inside the South Korean Cyber Attack (Ars Technica) — about thirty minutes after the broadcasters’ networks went down, the network of Korea Gas Corporation also suffered a roughly two-hour outage, as all 10 of its routed networks apparently went offline. Three of Shinhan Bank’s networks dropped offline as well […] Given the relative simplicity of the code (despite its Roman military references), the malware could have been written by anyone.

- BotNet Racking Up Ad Impressions — observed the Chameleon botnet targeting a cluster of at least 202 websites. 14 billion ad impressions are served across these 202 websites per month. The botnet accounts for at least 9 billion of these ad impressions. At least 7 million distinct ad-exchange cookies are associated with the botnet per month. Advertisers are currently paying $0.69 CPM on average to serve display ad impressions to the botnet.

- Legal Manual for Cyberwar (Washington Post) — the main reason I care so much about security is that the US is in the middle of a CyberCommie scare. Politicians and bureaucrats so fear red teams under the bed that they’re clamouring for legal and contra methods to retaliate, and then blindly use those methods on domestic disobedience and even good citizenship. The parallels with the 50s and McCarthy are becoming painfully clear: we’re in for another witch-hunting time when we ruin good people (and bad) because a new type of inter-state hostility has created paranoia and distrust of the unknown. “Are you now, or have you ever been, a member of the nmap team?”