"strata" entries

On the importance of imagination in data science

Strata Community Profile on Amy Heineike, Director of Mathematics

According to Amy Heineike, the Director of Mathematics at Quid, there’s nothing like having a fresh dataset in R and knowing how to use it. “You can add a few lines of code and discover all kinds of interesting information,” Heineike says. “One question leads to another, you get into a flow, and you can have an amazing exploration.”

Heineike started working with data several years ago at a consultancy in London, where “playing around” with data shed light on the impact of social networks on government policies. Part of her job was figuring out what types of data to use in order to find solutions to crucial problems, from public transportation to obesity. Her day-to-day work at Quid entails working with new data sets, prototyping analytics, and collaborating with an engineering team to improve data analysis and bring products into production.

Why? Why? Why!

A lesson for data science teams.

By Dean Malmgren and Mike Stringer

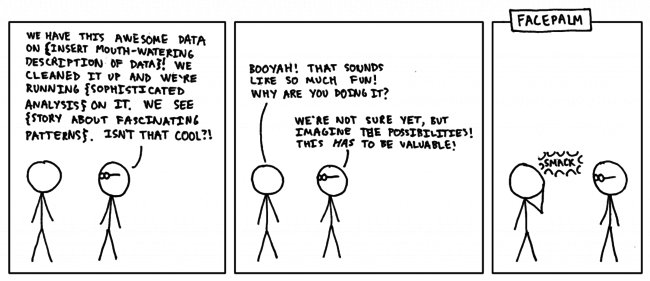

The other day we had a conversation with a bespectacled senior data scientist at another organization (named X to protect the innocent). The conversation went something like this:

Many of us have had similar conversations with people like X, and many of us have even been X before. Data scientists, being curious individuals, enjoy working on problems for the sake of doing something interesting, fun, technically challenging, or because their boss heard about “big data” in the Wall Street Journal. These reasons are all distinctly different from trying to solve an important problem.

Pursuing data science as a second profession

Featured Strata Community Profile on Yogi Saxena

Yogi Saxena is not one to back down from a challenge. The distance runner ran in his first marathon just two years ago in order to win a bet. Next month, he competes in another grueling marathon, his third. And if that were not enough, a friend’s Facebook post inspired him to train for a sprint triathlon. “I taught myself to swim when I was young,” Saxena says, revealing that his drive to learn new skills started early. “And if it wasn’t for the swim part, I’d have done an Olympic-distance triathlon instead.”

Saxena’s love of mastering new challenges is likely responsible for his decision to pursue data science as a second profession, after having a successful career as an electrical engineer. Currently at Boeing, he is responsible for developing a tool that would help visualize feeds from various classified and non-classified sources.

He is profiled here as part of the Strata community profiles.

Tips and Tricks for Debugging Distributed Systems

Preview of upcoming session at the Strata Conference

I’m talking on Wednesday at Strata about Tips and Tricks for Debugging Distributed Systems. You should come check it out.

As a preview, let’s talk about two pretty pictures.

Network Visualization

I’m running some typical distributed systems (HDFS, MapReduce, Impala, HBase, Zookeeper) on a small, seven-node cluster. The diagram above has individual processes and the TCP connections they’ve established to each other. Some processes are “masters” and they end up talking to many other processes.

Big data is dead, long live big data: Thoughts heading to Strata

The biggest problems will almost always be those for which the size of the data is part of the problem.

A recent VentureBeat article argues that “Big Data” is dead. It’s been killed by marketers. That’s an understandable frustration (and a little ironic to read about it in that particular venue). As I said sarcastically the other day, “Put your Big Data in the Cloud with a Hadoop.”

You don’t have to read much industry news to get the sense that “big data” is sliding into the trough of Gartner’s hype curve. That’s natural. Regardless of the technology, the trough of the hype cycle is driven by a familiar set of causes: it’s fed by over-aggressive marketing, the longing for a silver bullet that doesn’t exist, and the desire to spout the newest buzzwords. All of these phenomena breed cynicism. Perhaps the most dangerous is the technologist who never understands the limitations of data, never understands what data isn’t telling you, or never understands that if you ask the wrong questions, you’ll certainly get the wrong answers.

Big data is not a term I’m particularly fond of. It’s just data, regardless of the size. But I do like Roger Magoulas’ definition of “big data”: big data is when the size of the data becomes part of the problem. I like that definition because it scales. It was meaningful in 1960, when “big data” was a couple of megabytes. It will be meaningful in 2030, when we all have petabyte laptops, or eyeglasses connected directly to Google’s yottabyte cloud. It’s not convenient for marketing, I admit; today’s “Big Data!!! With Hadoop And Other Essential Nutrients Added” is tomorrow’s “not so big data, small data actually.” Marketing, for better or for worse, will deal.

Sketching techniques for real-time big data

Preview of an upcoming session at Strata Santa Clara

In many modern web and big data applications the data arrives in a streaming fashion and needs to be processed on the fly. In these applications, the data is usually too large to fit in main memory, and the computations need to be done incrementally upon arrival of new pieces of data. Sketching techniques allow these applications to be realized with high levels of efficiency in memory, computation, and network communications.

In the algorithms research community, sketching techniques first appeared in the literature in the 1980s, e.g., in the seminal work of Philippe Flajolet and G. Nigel Martin. They attracted attention in the late 1990s, partially inspired by the award-winning work of Noga Alon, Yossi Matias, and Mario Szegedy, and have been on fire in the 2000s and 2010s, when sketches were successfully designed not only for fundamental problems such as heavy hitters, but also for matrix computations, network algorithms, and machine learning. These techniques are now at an inflection point in their history, due to the following factors:

1. Untapped potential: Because they are so new, their huge practical potential has barely been tapped.

2. Breadth and maturity: They are now both broad and mature enough to start to be widely used across a variety of big data applications, and even act as basic building blocks for new highly efficient big data management systems.
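
To make the idea of a sketch concrete, here is a minimal illustration using a Count-Min sketch, one well-known sketch for frequency estimation of the kind used in heavy-hitter problems. It is not taken from the session itself; the class name, the `width` and `depth` defaults, and the sample stream are all assumptions chosen for illustration. The point is that memory stays fixed no matter how long the stream gets, and each new item is processed incrementally on arrival.

```python
import hashlib


class CountMinSketch:
    """Fixed-size frequency summary for a stream (illustrative sketch only)."""

    def __init__(self, width=256, depth=4):
        # width and depth are hypothetical defaults, not tuned values.
        self.width = width
        self.depth = depth
        self.rows = [[0] * width for _ in range(depth)]

    def _index(self, item, row):
        # Salt the hash with the row number so each row behaves
        # like a different hash function.
        digest = hashlib.sha256(f"{row}:{item}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.width

    def add(self, item, count=1):
        # One counter per row is updated for every arriving item.
        for row in range(self.depth):
            self.rows[row][self._index(item, row)] += count

    def estimate(self, item):
        # Collisions can only inflate a counter, so the minimum over
        # the rows is the tightest available estimate. It never
        # undercounts, but it may overcount.
        return min(
            self.rows[row][self._index(item, row)]
            for row in range(self.depth)
        )


# Made-up stream: "a" appears 50 times, "b" 20 times, "c" 5 times.
stream = ["a"] * 50 + ["b"] * 20 + ["c"] * 5
sketch = CountMinSketch()
for item in stream:
    sketch.add(item)
```

Calling `sketch.estimate("a")` returns a value that is at least the true count of 50. A wider table lowers the chance of overcounting at the cost of more memory, which is the basic trade-off that sketches expose.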

Who do you trust? You are surrounded by bots.

Preview of upcoming session "Who is Fake?" at the Strata Conference

By Lutz Finger

In The Matrix, the idea of a computer algorithm determining what we think may have seemed far-fetched. Really? Far-fetched? Let’s look at some numbers.

About half of all Americans get their news in digital form. This news is written up by journalists, half of whom at least partially source their stories from social media. They use tools to harvest the real-time knowledge of 100,000 tweets per second and more.

But what if someone could influence those tools and create messages that look as though they were part of a common consensus? Or create the appearance of trending?

Keep your data science efforts from derailing

Preview of upcoming session at Strata Santa Clara

By Marck Vaisman and Sean Murphy

Is your organization considering embracing data science? If so, we would like to give you some helpful advice on organizational and technical issues to consider before you embark on any initiatives or consider hiring data scientists. Join us, Sean Murphy and Marck Vaisman, two Washington, D.C.-based data scientists and founding members of Data Community DC, as we walk you through the trials and tribulations of practicing data scientists at our upcoming talk at Strata.

We will discuss anecdotes and best practices, and finish by presenting the results of a survey we conducted last year to help understand the varieties of people, skills, and experiences that fall under the broad term of “Data Scientist”. We analyzed data from over 250 survey respondents, and are excited to share our findings, which will also be published soon by O’Reilly.

Maps not lists: network graphs for data exploration

Preview of upcoming Strata session on data exploration

Amy Heineike is Director of Mathematics for Quid Inc, where she has been since its inception, prototyping and launching the company’s technology for analyzing document sets. Below is the teaser for her upcoming talk at Strata Santa Clara.

I recently discovered that my favorite map is online. It used to hang on my housemate’s wall in our little house in London back in 2005. At the time I was working to understand how London was evolving and changing, and how different policy or infrastructure changes (a new tube line, land use policy changes) would impact that.

The map was originally published as a center-page pull out from the Guardian, showing the ethnic groups that dominate different neighborhoods across the city. The legend was as long as the image, and the small print labels necessitated standing up close, peering and reading, tracing your finger to discover the Congolese on the West Green Road, our neighbors the Portuguese on the Stockwell Road, or the Tamils in Chessington in the distant south west.

Fruit or mobile device: learning concepts through connections

Preview of insights shared at upcoming session at Strata Santa Clara

Social media gives us the power to share content and engage with a wide range of internet users. As a person or brand, we are often concerned with who we are talking to and how we can better serve our viewers. Traditional demographics such as ‘female’ and ‘25-30’ are no longer sufficient in this arena. For example, Google is having a hard time getting gender and age correct for ad preferences. It is more interesting to observe what content is consumed and how attention changes over time.

Bitly, which is used to shorten and share links, can offer insight into this space. Its data offers an unprecedented view into what people are sharing and a holistic view of what users are concerned about on the internet.

We use their data to look into how we can define the audience of different content. The simplest example of this is: given a group of users that click on “oreilly.com”, what other websites do they engage with? We now have what bitly calls a co-click graph. Domains are represented as nodes, while an edge between two nodes represents the number of people that have clicked on both domains. A co-click graph can be made to represent any number of attributes, but for now we are going to remain interested in topics and keywords.
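
As a rough sketch of how such a graph could be assembled, the snippet below builds co-click edge weights from a list of (user, domain) click pairs. This is not bitly's implementation; the function name `co_click_graph`, the input shape, and the sample click log are all hypothetical, and we assume an edge weight is the number of distinct users who clicked on both domains.

```python
from collections import defaultdict
from itertools import combinations


def co_click_graph(clicks):
    """Return {(domain_a, domain_b): number of users who clicked both}."""
    # Collect the distinct domains each user clicked on.
    domains_by_user = defaultdict(set)
    for user, domain in clicks:
        domains_by_user[user].add(domain)

    edges = defaultdict(int)
    for domains in domains_by_user.values():
        # Sort so each undirected edge has one canonical key.
        for a, b in combinations(sorted(domains), 2):
            edges[(a, b)] += 1
    return dict(edges)


# Hypothetical click log: (user id, domain) pairs.
clicks = [
    ("u1", "oreilly.com"), ("u1", "github.com"),
    ("u2", "oreilly.com"), ("u2", "github.com"), ("u2", "nytimes.com"),
    ("u3", "oreilly.com"), ("u3", "nytimes.com"),
    ("u1", "oreilly.com"),  # repeat click, counted once per user
]
graph = co_click_graph(clicks)
```

Filtering the resulting edges to those that include “oreilly.com” gives the other domains that its audience engages with, weighted by how many users they share.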