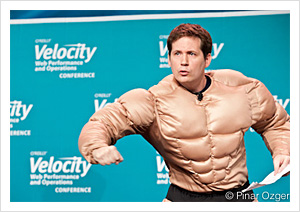

Velocity 2012 was a great experience: our best Velocity ever. Here are my personal highlights. No, they don’t include John Allspaw in the muscle suit.

Bryan McQuade gave a great tutorial on understanding and optimizing web performance metrics. What I particularly appreciated is that he really started in the basement: in the TCP/IP stack, and in the hardware. Abstraction has served us well over the years; we’ve successfully pushed a lot of plumbing into the walls, where nobody has to look at it much. But this abstraction has brought its own problems. It’s all too easy for a web developer to spend a whole career dealing with fairly abstract APIs and standards at (or even above) the top of the TCP/IP stack, and to forget what’s going on at the lower levels. And to do so is tantamount to having water dripping out of the walls, but not knowing how to fix the plumbing. It’s more important than ever to understand how a TCP connection gets started, the importance of packet sizes, how DNS affects performance, and more. As devices and networks get faster, users aren’t getting more forgiving about performance; they’re getting less forgiving.

On Tuesday (June 26) and Wednesday (June 27), Dr. Richard Cook and Mike Christian presented two related keynotes. As far as I know, there was no prior coordination between them, but they fit together perfectly. Cook talked about the difference between systems as imagined (or designed), and systems as found in the real world. As he said, the surprise isn’t that complex systems fail, but that they fail so rarely. We design for reliability, with multiple levels of redundancy and protection; but what we really want is resilience, the ability to withstand transients, and to recover swiftly when things go wrong. Can we build systems in advance (systems that we imagine) that have operational resilience, as found in the real world? That’s the problem, for web development and operations, as well as for medical systems.

Mike Christian’s keynote on Wednesday, “Frying Squirrels and Unspun Gyros,” was almost the perfect complement. In addition to lots of disaster porn, he showed us the way out of the predicament, the difference between systems as imagined and systems as they actually are. We build data centers with plenty of backups: UPS supplies, generators, all that. These systems are all extremely well designed, and as imagined, they ought to work. But as Amazon conveniently demonstrated just two days after Velocity ended, they don’t necessarily work in the real world. Christian pointed out that many data center outages are caused by problems in the backup systems; 29% are caused by UPS failures alone.

Given our poor track record at building systems that are really reliable, and given that all our efforts at reliability only lead to systems that are less reliable, what’s the alternative? Move up the stack, and build networks that are resilient: design software so that, when one data center goes down, load is automatically shifted to another data center in a different area. At that point, we can question whether we need backup systems at all. If, when the Virginia data center fails, load can shift to data centers in Oslo, Oregon, and Tokyo until the Virginia data center comes back online, do we really need to spend millions of dollars on backups that are actually making our systems less resilient?

It’s hard to leave Velocity without mentioning John Rauser’s talk. Rauser is one of my favorite speakers, and his talk focusing on the London cholera epidemic of 1854 was a masterpiece. We’re all familiar with looking at summary statistics and ignoring the outlying data. Rauser demonstrated that the outliers are often the most important: they’re exactly what you need to prove your point. In the context of operations, rather than epidemiology, outliers often show the appearance of a new failure mode. Look at the tail of your data; that’s where you’ll get a preview of your next outage, even if you’re not experiencing any problems now.
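To make the point about tails concrete, here is a minimal Python sketch. It is my own illustration, not anything from Rauser's talk: the latency numbers are made up, and `percentile` is a hypothetical helper using the simple nearest-rank method.

```python
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it.

    Hypothetical helper for illustration; pct is an integer from 1 to 100.
    """
    ordered = sorted(samples)
    # Ceiling division in integer arithmetic, to avoid floating-point rounding.
    rank = max(1, -(-pct * len(ordered) // 100))
    return ordered[rank - 1]


# Made-up response times in milliseconds: 98 ordinary requests and 2 very slow ones.
latencies_ms = [100] * 98 + [4000, 5000]

mean_ms = statistics.mean(latencies_ms)
median_ms = statistics.median(latencies_ms)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms} ms  median={median_ms} ms  p99={p99_ms} ms  max={max(latencies_ms)} ms")
```

With this invented data, the median says every request takes 100 ms and the mean only hints that something is off, while the 99th percentile and the maximum show the two requests that took seconds. Those are the requests worth investigating.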

If you missed Velocity, you missed a great event. We’re looking forward to seeing you next year, and in the meantime, building a faster, stronger web.

Photo: John Allspaw by O’Reilly Conferences, on Flickr
