- Fairness in Machine Learning — read this fabulous presentation. Most ML objective functions create models accurate for the majority class at the expense of the protected class. One way to encode “fairness” might be to require similar/equal error rates for protected classes as for the majority population.

- Safety Constraints and Ethical Principles in Collective Decision-Making Systems (PDF) — self-driving cars are an example of collective decision-making between intelligent agents and, possibly, humans. Damn, it’s hard to find non-paywalled research in this area. The list of things you can’t ensure in collective decision-making systems is horrifying.

- State of Hardware Report (Nate Evans) — rundown of the stats related to hardware startups. (via Renee DiResta)

- A Recent Discussion about DRM (Joi Ito) — strong arguments against including Digital Rights Management in W3C’s web standards (I can’t believe we’re still debating this; it’s such a self-evidently terrible idea to bake disempowerment into web standards).
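
The equal-error-rate idea from the Fairness in Machine Learning link above can be made concrete with a few lines of Python. This is only a minimal sketch: the group labels, the toy predictions, and the helper names `error_rates_by_group` and `error_rate_gap` are all illustrative assumptions, not anything taken from the presentation.

```python
from collections import defaultdict

def error_rates_by_group(y_true, y_pred, groups):
    """Return the misclassification rate for each group label."""
    errors = defaultdict(int)
    totals = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}

def error_rate_gap(rates):
    """Largest difference in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Toy data (made up): the model is perfect on the majority group
# and wrong on two of the three protected-group examples.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["majority"] * 5 + ["protected"] * 3

rates = error_rates_by_group(y_true, y_pred, groups)
gap = error_rate_gap(rates)
print(rates, gap)
```

A fairness requirement of the kind described would then be a bound on `gap`, checked during evaluation or folded into training as a constraint.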

"Big Data" entries

Four short links: 7 April 2016

Fairness in Machine Learning, Ethical Decision-Making, State of Hardware, and Against Web DRM

Four short links: 18 March 2016

Engineering Traits, Box of Souls, Transport Data, and Tortilla Endofunctors

- Engineers of Jihad (Marginal Revolution) — brief book review, tantalizing. The distribution of traits across disciplines mirrors almost exactly the distribution of disciplines across militant groups…engineers are present in groups in which social scientists, humanities graduates, and women are absent, and engineers possess traits — proneness to disgust, need for closure, in-group bias, and (at least tentatively) simplism…

- Box of a Trillion Souls — review and critique of some of Stephen Wolfram’s writing and speaking about AI and simulation and the nature of reality and complexity and … a lot.

- Alphabet Starting Sidewalk Labs (NY Times) — “We’re taking everything from anonymized smartphone data from billions of miles of trips, sensor data, and bringing that into a platform that will give both the public and private parties and government the capacity to actually understand the data in ways they haven’t before,” said Daniel L. Doctoroff, Sidewalk’s chief executive, who is a former deputy mayor of New York City and former chief executive of Bloomberg. Data, data, data.

- SIGBOVIK — the proceedings from 2015 include a paper that talks about “The Tortilla Endofunctor.” You’re welcome.

Four short links: 7 March 2016

Trajectory Data Mining, Manipulating Search Rankings, Open Source Data Exploration, and a Linter for Prose

- Trajectory Data Mining: An Overview (Paper a Day) — This is the data created by a moving object, as a sequence of locations, often with uncertainty around the exact location at each point. This could be GPS trajectories created by people or vehicles, spatial trajectories obtained via cell phone tower IDs and corresponding transmission times, the moving trajectories of animals (e.g. birds) fitted with trackers, or even data concerning natural phenomena such as hurricanes and ocean currents. It turns out, there’s a lot to learn about working with such data!

- Search Engine Manipulation Effect (PNAS) — Internet search rankings have a significant impact on consumer choices, mainly because users trust and choose higher-ranked results more than lower-ranked results. Given the apparent power of search rankings, we asked whether they could be manipulated to alter the preferences of undecided voters in democratic elections. They could. Read the article for their methodology. (via Aeon)

- Keshif — open source interactive data explorer.

- proselint — analyse text for sins of usage and abusage.

Using Apache Spark to predict attack vectors among billions of users and trillions of events

The O’Reilly Data Show podcast: Fang Yu on data science in security, unsupervised learning, and Apache Spark.

Subscribe to the O’Reilly Data Show Podcast to explore the opportunities and techniques driving big data and data science: Stitcher, TuneIn, iTunes, SoundCloud, RSS.

In this episode of the O’Reilly Data Show, I spoke with Fang Yu, co-founder and CTO of DataVisor. We discussed her days as a researcher at Microsoft, the application of data science and distributed computing to security, and hiring and training data scientists and engineers for the security domain.

DataVisor is a startup that uses data science and big data to detect fraud and malicious users across many different application domains in the U.S. and China. Founded by security researchers from Microsoft, the startup has developed large-scale unsupervised algorithms on top of Apache Spark, to (as Yu notes in our chat) “predict attack vectors early among billions of users and trillions of events.”

Several years ago, I found myself immersed in the security space, and at that time, tools that employed machine learning and big data were still rare. More recently, with the rise of tools like Apache Spark and Apache Kafka, I’m starting to come across many more security professionals who incorporate large-scale machine learning and distributed systems into their software platforms and consulting practices.

Four short links: 18 February 2016

Potteresque Project, Tumblr Teens, Hartificial Hand, and Denied by Data

- Homemade Weasley Clock (imgur) — construction photos of a clever Potter-inspired clock that shows where people are. (via Archie McPhee)

- Secret Lives of Tumblr Teens — teens perform joy on Instagram but confess sadness on Tumblr.

- Amazing Biomimetic Anthropomorphic Hand (Spectrum IEEE) — First, they laser scanned a human skeleton hand, and then 3D-printed artificial bones to match, which allowed them to duplicate the unfixed joint axes that we have […] The final parts to UW’s hand are the muscles, which are made up of an array of 10 Dynamixel servos, whose cable routing closely mimics the carpal tunnel of a human hand. Amazing detail!

- Life Insurance Can Gattaca You (FastCo) — “Unfortunately after carefully reviewing your application, we regret that we are unable to provide you with coverage because of your positive BRCA 1 gene,” the letter reads. In the U.S., about one in 400 women have a BRCA 1 or 2 gene, which is associated with increased risk of breast and ovarian cancer.

Four short links: 4 February 2016

Shmoocon Video, Smart Watchstrap, Generalizing Learning, and Dataflow vs Spark

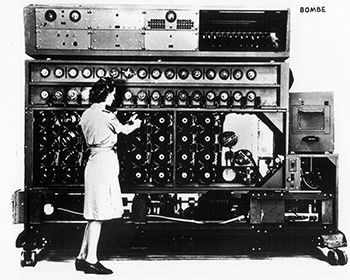

- Shmoocon 2016 Videos (Internet Archive) — videos of the talks from an astonishingly good security conference.

- TipTalk — Samsung watchstrap that is the smart device … put your finger in your ear to hear the call. You had me at put my finger in my ear. (via WaPo)

- Ecorithms — Leslie Valiant at Harvard broadened the concept of an algorithm into an “ecorithm,” which is a learning algorithm that “runs” on any system capable of interacting with its physical environment. Algorithms apply to computational systems, but ecorithms can apply to biological organisms or entire species. The concept draws a computational equivalence between the way that individuals learn and the way that entire ecosystems evolve. In both cases, ecorithms describe adaptive behavior in a mechanistic way.

- Dataflow/Beam vs Spark (Google Cloud) — To highlight the distinguishing features of the Dataflow model, we’ll be comparing code side-by-side with Spark code snippets. Spark has had a huge and positive impact on the industry thanks to doing a number of things much better than other systems had done before. But Dataflow holds distinct advantages in programming model flexibility, power, and expressiveness, particularly in the out-of-order processing and real-time session management arenas.
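
To give a feel for what "out-of-order processing" and "session management" mean in the Dataflow/Beam item above, here is a rough sketch in plain Python. It does not use the Dataflow, Beam, or Spark APIs, and it works on a finished batch rather than a live stream, so it ignores watermarks and triggers entirely. The function name `sessionize`, the event tuples, and the 60-second gap are assumptions made for illustration.

```python
from collections import defaultdict

def sessionize(events, gap_seconds):
    """Group (user, event_time) pairs into per-user sessions.

    Events may arrive in any order; sessions are built from event time,
    and a new session starts when the gap since the previous event
    exceeds gap_seconds. Returns {user: [(start, end, count), ...]}.
    """
    by_user = defaultdict(list)
    for user, ts in events:
        by_user[user].append(ts)

    sessions = {}
    for user, stamps in by_user.items():
        stamps.sort()  # reorder by event time, not arrival order
        user_sessions = [[stamps[0]]]
        for ts in stamps[1:]:
            if ts - user_sessions[-1][-1] <= gap_seconds:
                user_sessions[-1].append(ts)
            else:
                user_sessions.append([ts])
        sessions[user] = [(s[0], s[-1], len(s)) for s in user_sessions]
    return sessions

# Arrival order differs from event-time order for "ann".
events = [("ann", 100), ("bob", 105), ("ann", 700),
          ("ann", 130), ("bob", 110), ("ann", 720)]
result = sessionize(events, gap_seconds=60)
print(result)
```

The hard part in a streaming system is doing this incrementally while late events are still arriving, which is the area where the linked article argues the Dataflow model has an edge.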

Four short links: 29 January 2016

LTE Security, Startup Tools, Security Tips, and Data Fiction

- LTE Weaknesses (PDF) — ShmooCon talk about how weak LTE is: a lot of unencrypted exchanges between handset and basestation, cheap and easy to fake up a basestation.

- Analyzo — “Find and Compare the Best Tools for your Startup,” it claims. We’re in an age of software surplus: more projects, startups, apps, and tools than we can keep in our heads. There’s a place for curated lists, which is why every week brings a new one.

- How to Keep the NSA Out — NSA’s head of Tailored Access Operations (aka attacking other countries) gives some generic security advice, and some interesting glimpses. “Don’t assume a crack is too small to be noticed, or too small to be exploited,” he said. If you do a penetration test of your network and 97 things pass the test but three esoteric things fail, don’t think they don’t matter. Those are the ones the NSA, and other nation-state attackers, will seize on, he explained. “We need that first crack, that first seam. And we’re going to look and look and look for that esoteric kind of edge case to break open and crack in.”

- The End of Big Data — future fiction by James Bridle.

Four short links: 28 January 2016

Augmented Intelligence, Social Network Limits, Microsoft Research, and Google's Go

- Chimera (Paper a Day) — the authors summarise six main lessons learned while building Chimera: (1) Things break down at large scale; (2) Both learning and hand-crafted rules are critical; (3) Crowdsourcing is critical, but must be closely monitored; (4) Crowdsourcing must be coupled with in-house analysts and developers; (5) Outsourcing does not work at a very large scale; (6) Hybrid human-machine systems are here to stay.

- Do Online Social Media Remove Constraints That Limit the Size of Offline Social Networks? (Royal Society) — paper by Robin Dunbar. Answer: The data show that the size and range of online egocentric social networks, indexed as the number of Facebook friends, is similar to that of offline face-to-face networks.

- Microsoft Embedding Research — To break down the walls between its research group and the rest of the company, Microsoft reassigned about half of its more than 1,000 research staff in September 2014 to a new group called MSR NExT. Its focus is on projects with greater impact to the company rather than pure research. Meanwhile, the other half of Microsoft Research is getting pushed to find more significant ways it can contribute to the company’s products. The challenge is how to avoid short-term thinking from your research team. For instance, Facebook assigns some staff to focus on long-term research, and Google’s DeepMind group in London conducts pure AI research without immediate commercial considerations.

- Google’s Go-Playing AI — The key to AlphaGo is reducing the enormous search space to something more manageable. To do this, it combines a state-of-the-art tree search with two deep neural networks, each of which contains many layers with millions of neuron-like connections. One neural network, the “policy network,” predicts the next move, and is used to narrow the search to consider only the moves most likely to lead to a win. The other neural network, the “value network,” is then used to reduce the depth of the search tree — estimating the winner in each position in place of searching all the way to the end of the game.
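
The two roles described in the AlphaGo item above (a policy that narrows which moves to consider, and a value estimate that cuts the search short) can be illustrated on a toy game. This is a sketch under heavy assumptions: the game is a simple take-1-to-3-stones game where taking the last stone wins, the "networks" are hand-written stand-in functions rather than learned models, and the search is a plain depth-limited negamax rather than the tree search AlphaGo actually uses. All function names are hypothetical.

```python
def legal_moves(stones):
    """A player may take 1, 2, or 3 stones; taking the last stone wins."""
    return [m for m in (1, 2, 3) if m <= stones]

def policy(stones):
    """Stand-in for a policy network: a score per legal move.

    Hand-written heuristic: prefer leaving a multiple of four.
    """
    return {m: (1.0 if (stones - m) % 4 == 0 else 0.1)
            for m in legal_moves(stones)}

def value(stones):
    """Stand-in for a value network: estimated result in [-1, 1]
    for the player about to move."""
    return -1.0 if stones % 4 == 0 else 1.0

def search(stones, depth, top_k):
    """Depth-limited negamax: breadth cut by policy, depth cut by value.

    Returns (score, move) from the point of view of the player to move.
    """
    if stones == 0:
        return -1.0, None          # opponent took the last stone
    if depth == 0:
        return value(stones), None  # estimate instead of searching on
    priors = policy(stones)
    candidates = sorted(priors, key=priors.get, reverse=True)[:top_k]
    best_score, best_move = -2.0, None
    for move in candidates:
        child_score, _ = search(stones - move, depth - 1, top_k)
        score = -child_score        # opponent's gain is our loss
        if score > best_score:
            best_score, best_move = score, move
    return best_score, best_move

score, move = search(stones=10, depth=2, top_k=2)
print(score, move)
```

With `top_k=2` the search never examines the third move at any node, and with `depth=2` it never plays a game to the end; both savings depend entirely on how good the two stand-in functions are.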

Building a business that combines human experts and data science

The O’Reilly Data Show podcast: Eric Colson on algorithms, human computation, and building data science teams.

In this episode of the O’Reilly Data Show, I spoke with Eric Colson, chief algorithms officer at Stitch Fix, and former VP of data science and engineering at Netflix. We talked about building and deploying mission-critical, human-in-the-loop systems for consumer Internet companies. Knowing that many companies are grappling with incorporating data science, I also asked Colson to share his experiences building, managing, and nurturing large data science teams at both Netflix and Stitch Fix.

Augmented systems: “Active learning,” “human-in-the-loop,” and “human computation”

We use the term ‘human computation’ at Stitch Fix. We have a team dedicated to human computation. It’s a little bit coarse to say it that way because we do have more than 2,000 stylists, and these are very much human beings that are very passionate about fashion styling. What we can do is, we can abstract their talent into—you can think of it like an API; there’s certain tasks that only a human can do or we’re going to fail if we try this with machines, so we almost have programmatic access to human talent. We are allowed to route certain tasks to them, things that we could never get done with machines. … We have some of our own proprietary software that blends together two resources: machine learning and expert human judgment. The way I talk about it is, we have an algorithm that’s distributed across the resources. It’s a single algorithm, but it does some of the work through machine resources, and other parts of the work get done through humans.
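
One way to picture "a single algorithm distributed across machine and human resources" is a router that sends each task to a model when the model is confident and to a person otherwise. The sketch below is only an illustration of that general pattern. Nothing in the interview says Stitch Fix routes tasks this way, and every name here (`route_tasks`, `toy_model`, `toy_stylist`, the 0.8 threshold) is a made-up assumption.

```python
def route_tasks(tasks, machine_predict, ask_human, min_confidence=0.8):
    """Send each task to the machine or to a human expert.

    machine_predict(payload) -> (label, confidence in [0, 1])
    ask_human(payload)       -> label
    Returns {task_id: (label, "machine" or "human")}.
    """
    results = {}
    for task_id, payload in tasks.items():
        label, confidence = machine_predict(payload)
        if confidence >= min_confidence:
            results[task_id] = (label, "machine")
        else:
            # Low confidence: treat the human expert like an API call.
            results[task_id] = (ask_human(payload), "human")
    return results

def toy_model(payload):
    """Hypothetical model: confident only on short, simple requests."""
    if len(payload.split()) <= 3:
        return "basic", 0.95
    return "unsure", 0.40

def toy_stylist(payload):
    """Hypothetical human expert; in practice this would be a work queue."""
    return "custom"

tasks = {
    "t1": "plain white tee",
    "t2": "outfit for a beach wedding in October",
}
routed = route_tasks(tasks, toy_model, toy_stylist)
print(routed)
```

From the caller's side the result looks the same whichever resource produced it, which is the "programmatic access to human talent" framing in the quote.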