"open data" entries

Four short links: 11 April 2016

Speech GUI, AI Personality Design, Bipedal Robot, and Markets for Good

- SpeechKITT — open source flexible GUI for interacting with Speech Recognition in your web app.

- The Humanities Majors Designing AI Interactions — who else are you going to get to do it? As in fiction, the AI writers for virtual assistants dream up a life story for their bots. Writers for medical and productivity apps make character decisions such as whether bots should be workaholics, eager beavers or self-effacing. “You have to develop an entire backstory — even if you never use it,” Ewing said.

- SCHAFT’s Bipedal Robot — not an Austin Powers reference, but a clever working proof-of-concept. In theory, bipedalism allows robots to go wherever we can (versus, say, a Dalek).

- Markets for Good — Information to drive social impact.

Four short links: 24 March 2016

Work and Home GitHub, Museum Data, Bandwidth Incentives, and Motion Design

- Maintain Separate GitHub Accounts — simple advice.

- Cooper-Hewitt Pen Data — anonymized data from the Cooper-Hewitt design museum’s fantastic pen.

- Zero Rating’s Problem — Wikipedia was zero-rated for Angola, so Angolans began swapping movies via Wikipedia. Zero rating (“no data charge for this service”) is an incentive to use the site, not necessarily for the purpose intended.

- Motion Design is the Future of UI — Motion tells stories. Everything in an app is a sequence, and motion is your guide. Someone caught the animations and transitions bug.

Four short links: 9 December 2015

Graph Book, Data APIs, Mobile Commerce Numbers, and Phone Labs

- Networks, Crowds, and Markets — network theory (graph analysis), small worlds, network effects, power laws, markets, voting, property rights, and more. A book that came out of a Cornell course by ACM-lauded Jon Kleinberg.

- Qu — a framework for building data APIs. From a government department, no less. (via Nelson Minar)

- Three Most Common M-Commerce Questions Answered (Facebook) — When we examined basket sizes on an m-site versus an app, we found people spend 43 cents in app to every $1 spent on m-site. (via Alex Dong)

- Phonelabs — science labs with mobile phones. All open sourced for maximum spread.

Four short links: 30 July 2015

Catalogue Data, Git for Data, Computer-Generated Handwriting, and Consumer Robots

- A Sort of Joy — MoMA’s catalogue was released under a CC license, and has even been used to create new art. The performance is probably NSFW at your work without headphones on, but is hilarious. Which I never thought I’d say about a derivative work of a museum catalogue. (via Courtney Johnston)

- dat goes beta — the “git for data” goes beta. (via Nelson Minar)

- Computer Generated Handwriting — play with it here. (via Evil Mad Scientist Labs)

- Japanese Telcos vie for Consumer Robot-as-a-Service Business (Robohub) — NTT says Sota will be deployed in seniors’ homes as early as next March, and can be connected to medical devices to help monitor health conditions. This plays well with Japanese policy to develop and promote technological solutions to its aging population crisis.

Four short links: 1 July 2015

Recovering from Debacle, Open IRS Data, Time Series Requirements, and Error Messages

- Google Dev Apologizes After Photos App Tags Black People as Gorillas (Ars Technica) — this is how you recover from an unequivocally horrendous mistake.

- IRS Finally Agrees to Release Non-Profit Records (BoingBoing) — Today, the IRS released a statement saying they’re going to do what we’ve been hoping for, saying they are going to release e-file data and this is a “priority for the IRS.” Only took $217,000 in billable lawyer hours (pro bono, thank goodness) to get there.

- Time Series Database Requirements — classic paper, laying out why time-series databases are so damn weird. Their access patterns are so unique because of the way data is over-gathered and pushed ASAP to the store. It’s mostly recent, mostly never useful, and mostly needed in order. (via Thoughts on Time-Series Databases)

- Compiler Errors for Humans — it’s so important, and generally underbaked in languages. A decade or more ago, I was appalled by Python’s errors after Perl’s very useful messages. Today, appreciating Go’s generally handy errors. How a system handles the operational failures that will inevitably occur is part and parcel of its UX.

Protecting health through open data management principles

Personal wellness data should be shared as freely as water and air.

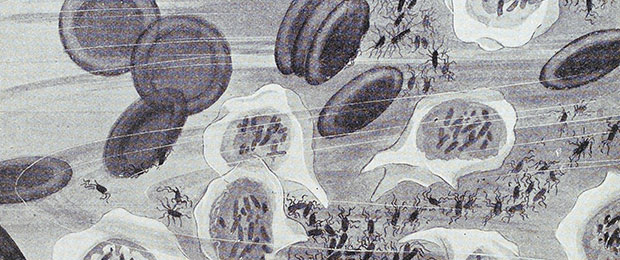

Register for the free webcast, “Life Streams, Walled Gardens, and the Internet of Living Things.” Brigitte Piniewski and Hagen Finley will discuss the Internet of Living Things, what makes sensoring and monitoring data emanating from our bodies unique, and why we should elect to participate in this seemingly Orwellian mistake of open-sourcing our personal health data.

We are at a threshold in the history of personal data. Sensors and apps are making it possible to generate digital data signatures of important aspects of healthy living, such as movement, nutrition, and sleep. However, we are rapidly losing the opportunity to erect a Linux-like open “living-well” data system steeped in open commons principles. We can either join together to ensure enlightened open source and crowdsourced discovery practices become the norm for our living-well data footprints, or we can passively allow this data to be sequestered into one of the walled gardens offered by health systems, funded research, or big business.

Why is this important?

Living-well data provides the map by which vast amounts of preventable human suffering can be prevented. Everyone can benefit from the health journeys of those who lived before us because our modern societies are no longer “accidentally well.” Decades ago, parents had no need to question the nutrition a child was offered or concern themselves with how much activity a child engaged in. No deliberate use of devices was needed to track these important health contributors. Reasonable access to whole foods (farm foods) and reasonable amounts of activity were provided, as it were, by default — in other words, by accident. This resulted in remarkably low rates of chronic disease. Today, communities cannot take those healthy choices for granted — we are no longer accidentally well. Read more…

Four short links: 24 February 2015

Open Data, Packet Dumping, GPU Deep Learning, and Genetic Approval

- Wiki New Zealand — open data site, and check out the chart builder behind the scenes for importing the data. It’s magic.

- stenographer (Google) — open source packet dumper for capturing data during intrusions.

- Which GPU for Deep Learning? — a lot of numbers. Overall, I think memory size is overrated. You can nicely gain some speedups if you have very large memory, but these speedups are rather small. I would say that GPU clusters are nice to have, but that they cause more overhead than they accelerate progress; a single 12GB GPU will last you for 3-6 years; a 6GB GPU is plenty for now; a 4GB GPU is good but might be limiting on some problems; and a 3GB GPU will be fine for most research that looks into new architectures.

- 23andMe Wins FDA Approval for First Genetic Test — as they re-enter the market after FDA power play around approval (yes, I know: one company’s power play is another company’s flouting of safeguards designed to protect a vulnerable public).

Four short links: 1 January 2015

Wearables Killer App, Open Government Data, Gender From Name, and DVCS for Geodata

- Killer App for Wearables (Fortune) — While many corporations are still waiting to see what the “killer app” for wearables is, Disney invented one. The company launched the RFID-enabled MagicBands just over a year ago. Since then, they’ve given out more than 9 million of them. Disney says 75% of MagicBand users engage with the “experience”—a website called MyMagic+—before their visit to the park. Online, they can connect their wristband to a credit card, book fast passes (which let you reserve up to three rides without having to wait in line), and even order food ahead of time. […] Already, Disney says, MagicBands have led to increased spending at the park.

- USA Govt Depts Progress on Open Data Policy (labs.data.gov) — nice dashboard, but who will be watching it and what squeeze will they apply?

- globalnamedata — We have collected birth record data from the United States and the United Kingdom across a number of years for all births in the two countries and are releasing the collected and cleaned up data here. We have also generated a simple gender classifier based on incidence of gender by name.

- geogig — an open source tool that draws inspiration from Git, but adapts its core concepts to handle distributed versioning of geospatial data.
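
The globalnamedata item above describes a gender classifier "based on incidence of gender by name." As a rough illustration of that idea (not the project's actual code), here is a minimal Python sketch. The function names, the 0.9 threshold, and the toy counts are all assumptions made up for this example; real birth-record data would replace them.

```python
from collections import defaultdict

# Toy (name, sex, births) rows. These numbers are invented for
# illustration only and are NOT taken from any real dataset.
TOY_RECORDS = [
    ("Alice", "F", 990),
    ("Alice", "M", 10),
    ("Jordan", "F", 300),
    ("Jordan", "M", 700),
    ("Bob", "M", 500),
]


def build_counts(records):
    """Tally births per lowercased name, split by recorded sex."""
    counts = defaultdict(lambda: {"F": 0, "M": 0})
    for name, sex, births in records:
        counts[name.lower()][sex] += births
    return counts


def classify(name, counts, threshold=0.9):
    """Return 'F' or 'M' when one sex dominates the name's births,
    otherwise 'unknown'. The threshold is a hypothetical choice."""
    tally = counts.get(name.lower())
    if tally is None:
        return "unknown"  # name never seen in the records
    total = tally["F"] + tally["M"]
    if total == 0:
        return "unknown"
    share_f = tally["F"] / total
    if share_f >= threshold:
        return "F"
    if share_f <= 1 - threshold:
        return "M"
    return "unknown"  # name is too evenly split to call


if __name__ == "__main__":
    counts = build_counts(TOY_RECORDS)
    for candidate in ("Alice", "Bob", "Jordan", "Zed"):
        print(candidate, classify(candidate, counts))
```

Returning "unknown" for split or unseen names is a deliberate choice in this sketch: an incidence-based guess is only as good as the records behind it, so it seems safer to abstain than to force a label.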

We need open models, not just open data

If you really want to understand the effect data is having, you need the models.

Writing my post about AI and summoning the demon led me to re-read a number of articles on Cathy O’Neil’s excellent mathbabe blog. I highlighted a point Cathy has made consistently: if you’re not careful, modelling has a nasty way of enshrining prejudice with a veneer of “science” and “math.”

Cathy has consistently made another point that’s a corollary of her argument about enshrining prejudice. At O’Reilly, we talk a lot about open data. But it’s not just the data that has to be open: it’s also the models. (There are too many must-read articles on Cathy’s blog to link to; you’ll have to find the rest on your own.)

You can have all the crime data you want, all the real estate data you want, all the student performance data you want, all the medical data you want, but if you don’t know what models are being used to generate results, you don’t have much. Read more…

Four short links: 4 November 2014

3D Shares, Autonomous Golf Carts, Competitive Solar, and Interesting Data Problems

- Cooper-Hewitt Shows How to Share 3D Scan Data Right (Makezine) — important as we move to a web of physical models, maps, and designs.

- Singapore Tests Autonomous Golfcarts (Robohub) — a reminder that the future may not necessarily look like someone used the clone tool to paint Silicon Valley over the world.

- Solar Hits Parity in 10 States, 47 by 2016 (Bloomberg) — The reason solar-power generation will increasingly dominate: it’s a technology, not a fuel. As such, efficiency increases and prices fall as time goes on. The price of Earth’s limited fossil fuels tends to go the other direction.

- Facebook’s Top Open Data Problems (Facebook Research) — even if you’re not interested in Facebook’s Very First World Problems, this is full of factoids such as: Facebook’s social graph store TAO, for example, provides access to tens of petabytes of data, but answers most queries by checking a single page in a single machine. (via Greg Linden)