This week, we continue looking at search privacy issues and at the ongoing battle between Google, Bing, and Yahoo. Oh, and writing robots — we’ll look at those, too.

Privacy and tracking issues

Searchers don’t often think about privacy, but governments certainly do, and over time, search engines have had to balance gathering as much data as possible to improve search results against concerns about privacy. In 2008, Yahoo was very vocal about their policy of retaining data for only 90 days. Now, they’ve changed that policy. They’ll keep raw search log data for 18 months and “have gone back to the drawing board” regarding other log file data.

Microsoft and Google keep search logs for 18 months, and Yahoo may have found that keeping this data for a shorter period put them at a competitive disadvantage. In the new book “In the Plex,” Steven Levy talks about how important Google found search data to be early on.

The search behavior of users, captured and encapsulated in the logs that could be analyzed and mined, would make Google the ultimate learning machine … Over the years, Google would make the data in its logs the key to evolving its search engine. It would also use those data on virtually every other product the company would develop.

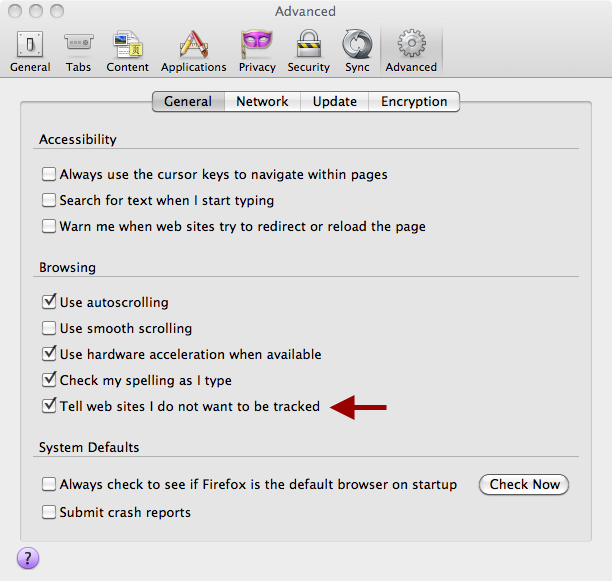

Perhaps that’s why Google hasn’t added the new “do not track” header to Chrome. The data is too valuable for Google to encourage users to opt out.

Firefox 4 includes a “do not track” option. Whether sites choose to honor it is another matter.

Although, as security researcher Christopher Soghoian said to Wired:

“The opt-out cookies and their plug-in are not aimed at consumers. They are aimed at policy makers. Their purpose is to give them something to talk about when they get called in front of Congress. No one is using this plug-in and they don’t expect anyone to use it.”

And as the Wired article notes, the header doesn’t mean much at the moment as companies aren’t using it and legislation doesn’t require them to.
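
For the curious, here is roughly what honoring the header could look like on a site's side. Browsers with the option turned on send `DNT: 1` with each request. The sketch below is only an illustration under that assumption: `wants_no_tracking` and `handle_request` are hypothetical names, the headers are assumed to arrive as a plain dictionary, and no real search engine or ad network is claimed to work this way.

```python
def wants_no_tracking(headers):
    """Return True if the request headers carry a "DNT: 1" preference."""
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("dnt") == "1"


def handle_request(headers):
    """Hypothetical handler that decides whether to log the visit."""
    if wants_no_tracking(headers):
        # The visitor asked not to be tracked, so skip any logging step.
        return "served without tracking"
    return "served with tracking"


if __name__ == "__main__":
    print(handle_request({"DNT": "1"}))
    print(handle_request({"User-Agent": "ExampleBrowser"}))
```

The check itself is trivial, which underlines the point above: the hard part is not reading the header but getting companies to act on it.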

Bing continues to gain search share

Last week, I noted that Bing was slowly gaining search share in the United States. This week, the Bing UK blog said that they are gaining share in the UK as well. Of course, the gain between February and March of 2011 was only 0.28% and Google is still at 90% share, but hey, Bing will take what they can get.

Yahoo reports revenue declines

On Search Engine Land, Danny Sullivan has a great article digging into the details of Yahoo’s second-quarter earnings. Yahoo is blaming the revenue decline on the new partnership with Microsoft, but the article points out that the explanation isn’t as simple as that, and in fact, revenue began declining long before the switch was made.

Can robots write better content than humans?

In recent weeks, Google has been in the news for tweaking its algorithms to better rank sites with unique, high-quality content rather than pages from “content farms.” But in some cases, can machines write higher-quality stories than people? A recent NPR story recounts a journalism face-off between a robot journalist and a human journalist … and the robot won. Certainly, algorithms are great at data extraction and in some cases, at presenting that data. But we probably don’t want machines to take over the analysis, do we?
