Mike Barlow

At Solid San Francisco, you could see all the way to the future

Making sense of the intersection between connected devices, accessible hardware, and synthetic biology.

Register for Solid Amsterdam, October 28, 2015 — space is limited.

I don’t usually describe conferences as “mind expanding,” but in this instance, the description is justified. At O’Reilly’s Solid 2015: Hardware, Software & the Internet of Things, held recently at Fort Mason in San Francisco, I encountered startling views of the future, thoughtful presentations on creative combinations of exciting new technologies, and warnings about what might happen if somehow it all goes wrong.

Dozens of speakers and presenters covered topics ranging from synthetic biology to augmented reality helmets to 3D printed automobiles. The audience was a mix of software developers, hardware designers, traditional manufacturers, digital manufacturers, academics, entrepreneurs, and venture capitalists keen to spot the next major tech trends.

The conference was organized around multiple tracks and themes, including data, design, software, hardware, product development, manufacturing, biology, security, technology, and startups. What follows is an overview of my key takeaways. Read more…

Building functional teams for the IoT economy

Experts weigh in on more fluid approaches to IoT team building.

Download a free copy of our new report, “When Worlds Collide: Hardware, Software, and Manufacturing Teams for the IoT,” by Mike Barlow. Editor’s note: this post is an excerpt from the report.

You don’t have to be a hardcore dystopian to imagine the problems that can unfold when worlds of software collide with worlds of hardware. When you consider the emerging economics of the Internet of Things (IoT), the challenges grow exponentially, and the complexities are daunting.

For many people, the IoT suggests a marriage of software and hardware. But the economics of the IoT involves more than a simple binary coupling. In addition to software development and hardware design, a viable end-to-end IoT process would include prototyping, sourcing raw materials, manufacturing, operations, marketing, sales, distribution, customer support, finance, legal, and HR.

It’s reasonable to assume that an IoT process would be part of a larger commercial business strategy that also involves multiple sales and distribution channels (e.g., wholesale, retail, value-added reseller, direct); warehousing; operations; and interactions with a host of regulatory agencies at various levels of government, depending on the city, state, region, and country.

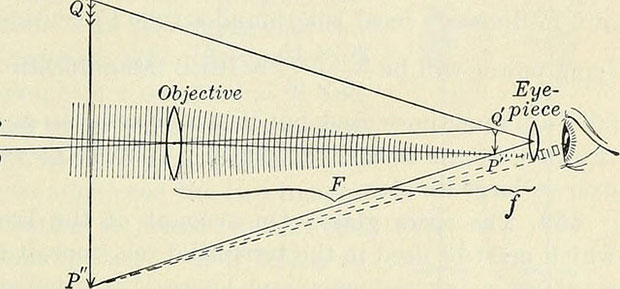

A diagram of a truly functional IoT would look less like a traditional linear supply chain and more like an ecosystem or a network. The most basic supply chain looks something like this: Read more…

Big data’s move to the cloud

A new survey shows the market is ready for cloud-based big data services.

One night when our son was two years old, he abruptly decided that he didn’t like taking baths. As my wife recalls, he struggled mightily against the ritual of bathing for several months until, suddenly and mysteriously, he decided that he liked bathing again. We’re happy to report that he has managed to stay relatively clean ever since.

When I speak with CIOs and other IT leaders about moving big data operations into the cloud, I am reminded of our son’s unexplained loathing of the bathtub.

Nearly everyone associated with IT understands that most IT operations — including big data analytics — must eventually move into the cloud. The traditional on-premises approaches are simply too costly, and CIOs are under crushing pressure to shift budgetary resources to value-added, customer-facing activities.

For most companies, the writing is already on the wall. The cloud offers greater agility and elasticity, and quicker product development cycles — and can reduce costs. When you add up the benefits, it seems inevitable that the bulk of IT operations will move into the cloud. Nevertheless, the foot-dragging and excuse-making continues. Read more…

Roll-your-own database architecture

Making the case for blended architectures in the rapidly evolving universe of advanced analytics.

Two years ago, most of the conversations around big data had a futuristic, theoretical vibe. That vibe has been replaced with a gritty sense of practicality. Today, when big data or some surrogate term arises in conversation, the talk is likely to focus not on “what if,” but on “how do we get it done?” and “what will it cost?”

Real-time big data analytics and the increasing need for applications capable of handling mixed read/write workloads — as well as transactions and analytics on “hot” data — are putting new pressures on traditional data management architectures.

What’s driving the need for change? There are several factors, including a new class of apps for personalizing the Internet, serving dynamic content, and creating rich user experiences. These apps are data driven, which means they essentially feed on deep data analytics. You’ll need a steady supply of activity history, insights, and transactions, plus the ability to combine historical analytics with hot analytics and read/write transactions. Read more…

Extracting value from the IoT

Data from the Internet of Things makes an integrated data strategy vital.

Union Pacific uses infrared and audio sensors placed on its tracks to gauge the state of wheels and bearings as the trains pass by.

The Internet of Things (IoT) is more than a network of smart toasters, refrigerators, and thermostats. For the moment, though, domestic appliances are the most visible aspect of the IoT. But they represent merely the tip of a very large and mostly invisible iceberg.

IDC predicts that by the end of 2020, the IoT will encompass 212 billion “things,” including hardware we tend not to think about: compressors, pumps, generators, turbines, blowers, rotary kilns, oil-drilling equipment, conveyer belts, diesel locomotives, and medical imaging scanners, to name a few. Sensors embedded in such machines and devices use the IoT to transmit data on such metrics as vibration, temperature, humidity, wind speed, location, fuel consumption, radiation levels, and hundreds of other variables. Read more…

How to be agile with your big data

Agile methodology brings flexibility to the EDW and offers ways to integrate open-source technologies with existing systems.

Data analysis, like other pursuits, is a balancing act. The rise of big data ratchets up the pressure on the traditional enterprise data warehouse (EDW) and associated software tools to handle rapidly evolving sets of new demands posed by the business. Companies want their EDW systems to be more flexible and more user friendly — without sacrificing processing speeds, data integrity, or overall reliability.

“The more data you give the business, the more questions they will ask,” says José Carlos Eiras, who has served as CIO at Kraft Foods, Philip Morris, General Motors, and DHL. “When you have big data, you have a lot of different questions, and suddenly you need an enterprise data warehouse that is very flexible.”

EDWs are remarkably powerful, but it takes considerable expertise and creativity to modify them on the fly. Adding new capabilities to the EDW generally requires significant investments of time and money. You can develop your own tools internally or purchase them from a vendor, but either way, it’s a hard slog. Read more…

Venal Sins: Cash, Sex, and IT Infrastructure

Yet again, I reveal the base instincts driving my interest in big data. It’s not the science – it’s the cash. And yes, on some level, I find the idea of all that cash sexy. Yes, I know it’s a failing, but I can’t help it. Maybe in my next life I’ll develop a better appreciation of the finer things, and I will begin to understand the real purpose of the universe…

Until then, however, I’m happy to write about the odd and interesting intersection of big data and big business. As noted in my newest paper, big data is driving a renaissance in IT infrastructure spending. IDC, for example, estimates that worldwide spending for infrastructure hardware alone (servers, storage, PCs, tablets, and peripherals) will rise from $461 billion in 2013 to $468 billion in 2014. Gartner predicts that total IT spending will grow 3.1% in 2014, reaching $3.8 trillion, and forecasts “consistent four to five percent annual growth through 2017.” For a lot of people, including me, the mere thought of all that additional cash makes IT infrastructure seem sexy again.

Of course, there’s more to the story than networks, servers, and storage devices. But when people ask me, “Is this big data thing real? I mean, is it real???” the easy answer is yes, it must be real because lots of companies are spending real money on it. I don’t know if that’s enough to make IT infrastructure sexy, but it sure makes it a lot more fascinating and – dare I say it – intriguing than it seemed last year.

In life, sex is the key to survival. In business, cash is king. Is there a connection? Read my paper, and please let me know.

Get your free digital copy of Will Big Data Make IT Infrastructure Sexy Again? — compliments of Syncsort.

CIOs and the Big Data Challenge

The trend is clear: The CIO’s IT budget is getting smaller and the CMO’s IT budget is getting larger. As a result, the CIO’s role is diminishing and the CMO’s role is expanding. From a business perspective, the shift feels inevitable. Despite talk about transforming corporate IT organizations from cost centers into profit centers, the role of the CIO has remained largely administrative.

In their hearts, most CIOs know the score. They’ve won their battle to earn “a seat at the table,” but the table has gotten smaller. The main challenges ahead of them are technical, not strategic. Their key areas of focus today are mobility, cloud, and security. They are aware of big data, but it’s just not a survival issue for them.

Big Data Culture Gap: Technology Advancing More Quickly Than People and Processes

Big data tools are evolving more quickly than the people and organizations using them.

Way back in October 2012, mere days before Hurricane Sandy filled our basement with five feet of water, the nice editors at O’Reilly Media asked me to write a white paper on the emerging architecture of big data. That paper was followed by two more, one about the emerging culture of big data, and another about the impact of big data on the traditional IT function.

Despite my attempts to make all three papers seem wildly different, they all share a common theme or subtext, namely that the technology of big data is evolving far more quickly than the people and processes of big data.

In other words, the tools are more advanced than the organizations using them. At least that’s my takeaway. After interviewing dozens of data analysts, industry experts, and senior-level corporate executives, I’m convinced that the technology is advancing faster than the abilities of the people trying to use it.

Big data vs. big reality

It's not the data itself but what you do with it that counts.

This post originally appeared on Cumulus Partners. It’s republished with permission.

Quentin Hardy’s recent post in the Bits blog of The New York Times touched on the gap between representation and reality that is a core element of practically every human enterprise. His post is titled “Why Big Data is Not Truth,” and I recommend it for anyone who feels like joining the phony argument over whether “big data” represents reality better than traditional data.

In a nutshell, this “us” versus “them” approach is like trying to pick a fight between oil painters and watercolorists. Neither oil painting nor watercolor is “truth”; both are forms of representation. And here’s the important part: Representation is exactly that, a representation or interpretation of someone’s perceived reality. Pitting “big data” against traditional data is like asking you if Rembrandt is more “real” than Gainsborough. Both of them are artists and both painted representations of the world they perceived around them.

The problem with false arguments like the one posed by Hardy is that they obscure the value of data — traditional data and big data — and the impact of data on our culture. I’m now working my way through “Raw Data” is an Oxymoron, an anthology of short essays about data. I recommend it for anyone who is seriously interested in thinking about the many ways in which data has influenced (and continues influencing) our lives. I especially recommend “facts and FACTS: Abolitionists’ Database Innovations,” by Ellen Gruber Garvey. As its title suggests, the essay focuses on what proves to be an absolutely fascinating period of U.S. history in which the anti-slavery movement harvested data from real advertisements in Southern newspapers to paint a vivid and believable picture of the routine horrors inflicted by the slave system on real human beings.

That 19th century use of data mining built support for the anti-slavery movement, both in the U.S. and in England. The data played a key role in making the case for abolishing slavery — even though it required the bloodiest war in U.S. history to make abolition a fact.

Data itself has no quality. It’s what you do with it that counts.