"data journalism" entries

Rapid growth, open data, and the three people every data journalism team needs.

A flurry of articles are published each week purporting to give us a “progress report” on the state of data journalism. This week, Frederic Filloux of the Guardian and former Financial Times journalist Tom Foremski debate whether the quality of data journalism is improving fast, or not fast enough. No matter which side of the argument you fall on, there’s no doubt that newsrooms are snapping up data journalists at a fast clip. To that end, the Knight Lab offers advice on the three types of people newsrooms should hire in order to build strong data journalism teams.

Your links for the week:

-

Data Journalism is improving – fast (Guardian)

The last Data Journalism Awards established that the genre is getting better, wider in scope and gaining many creative players.

-

Is it Data Journalism Or Fancy Infographics? Progress Isn’t Fast Enough (Silicon Valley Watcher)

The information conveyed is excellent but what does that do towards developing a sustainable business model for quality journalism? If data journalism can get us to that point then we can say it has made great progress but it’s not improving fast enough.

-

Want to build a data journalism team? You’ll need these three people (Knight Lab)

When I started using software to analyze data as a reporter in the late 1980s, “data journalism” ended once my stories were published in the newspaper. Now the publication of the story is just the beginning. The same data can also be turned into compelling visualizations and into news applications that people can use long after the story is published. So data journalism — which was mostly a one-person job when I started doing it — is now a team sport.

Free Tableau for reporters, data journalism award winners, and handling data sets about race

I think we can all agree that “free” is usually a good thing. To that end, investigative journalists got a huge boost this week from Tableau, which has decided to provide journalists with free licenses for its desktop professional software. Also in the links, the Global Editors Network has announced this year’s data-driven journalism award winners. And Matt Waite of the Sarasota Herald-Tribune offers tips for journalists about how to avoid some of the common pitfalls that lead to the collection of bad data.

Your links for the week:

-

Tableau Software to Provide Complimentary Software to Journalists (IRE)

“At Tableau we believe that data is an important part of the civic conversation,” said Ellie Fields, Senior Director of Product Marketing. “Journalists have embraced Tableau Public as a way to tell important stories with data, and we want to support them in their work. And while Tableau Public is a fantastic product for making data open, journalists often need to keep their data private while they are developing a story. Providing free licenses to Tableau Desktop Professional will let them do that.”

-

6 mistakes newspapers make with data journalism (INMA)

Too much of what is claimed to be “data journalism” in today’s media is really just ego-driven “data porn” — pretty pictures created around numbers with no real reader value, according to an international “data guru” with strong journalism credentials.

-

Handling Data about Race and Ethnicity Or, How Matt Waite Got his Butt Kicked (Mozilla Open News)

Race and ethnicity are tricky topics with loads of nuance and definitional difficulties. But they aren’t the only places these issues come up. Anytime you’re comparing data across agencies and across geographies, be on high alert for mismatches.
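As a rough illustration of the mismatch check described above, the Python sketch below compares the category labels used by two hypothetical agencies before their records are combined. The agency names, labels, counts, and the `category_mismatches` helper are all invented for illustration; none of them come from the linked article.

```python
def category_mismatches(labels_a, labels_b):
    """Return the labels that appear in only one of the two datasets.

    Labels are compared after trimming whitespace and lowercasing, so
    "Hispanic " and "hispanic" count as the same category.
    """
    norm_a = {label.strip().lower() for label in labels_a}
    norm_b = {label.strip().lower() for label in labels_b}
    return sorted(norm_a - norm_b), sorted(norm_b - norm_a)


# Hypothetical records: (category label, count). Invented for illustration.
agency_a = [("White", 120), ("Black", 80), ("Hispanic", 45), ("Asian", 12)]
agency_b = [("white", 300), ("black", 150), ("asian", 30), ("other", 9)]

only_a, only_b = category_mismatches(
    [label for label, _ in agency_a],
    [label for label, _ in agency_b],
)

# Categories present in one agency's data but not the other need a manual
# look before any totals are compared.
print("Only in agency A:", only_a)
print("Only in agency B:", only_b)
```

A check like this only surfaces labels that differ. Two agencies can use the same label with different definitions, and that still has to be confirmed by reading each agency's documentation.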

What journalists can learn from gamers, using ‘citizen sensors’, and best hits of a data pioneer

As the field grows, and the demands for “data journalists” proliferate, journalists find themselves walking a fine line between embracing technology’s potential in the field, and never losing sight of the crucial role of the journalist — which has traditionally been focused on helping people acquire the tools to make sense of information. This week’s links include stories about how journalists and storytellers are adapting the profession for success in this new world of information, where the data tells the story.

Journalism and Technology

-

What News Nerds Can Learn from Game Nerds, Day One (The ProPublica Nerd Blog)

In journalism, we’ve heard over and over again that mobile is the future. So what kind of storytelling can we do to take advantage of the fact that if they’re on their smartphone we know our readers’ physical location, and that with the right inspiration, they are willing to move great distances? What if on election day, we could help voters find their most convenient polling locations?

-

The danger of journalism that moves too quickly beyond fact (Poynter)

The best thinking about journalism’s future benefits from its being in touch with technology’s potential. But it can get in its own way when it simplifies and repudiates the intelligence of journalism’s past. Machines bring the capacity to count. Citizens bring expertise, experience and an expanded capacity to observe events from more vantage points. Journalists bring access, the ability to interrogate people in power, to dig, to translate and triangulate incoming information, and a traditional discipline of an open-minded pursuit of truth. They work best in concert.

-

A pioneer retraces the data trail (The Age)

Author Simon Rogers founded the Datablog in early 2009 and oversaw it until May 2013 when he became Data Editor at Twitter. This book is a “best hits” compilation, a primer for data journalists and a compendium of weird and wonderful facts.

Data Journalists Gather, Transparency, and Data Viz

Notes and links from the data journalism beat

Data journalism is becoming a truly global practice. Data journalists from the UK, China, and the US are sharing data-oriented best practices, insights, and tools. Journalists in Latin America are meeting this week to push for more transparency and access to data in the region. At the same time, recent revelations about NSA domestic surveillance programs have pushed big data stories to the front pages of US papers. Here are a few links from the past week:

Transparency…or Lack Thereof

-

OpenData Latinoamérica: Driving the demand side of data and scraping towards transparency (Nieman Journalism Lab)

“There’s a saying here, and I’ll translate, because it’s very much how we work,” Miguel Paz said to me over a Skype call from Chile. “But that doesn’t mean that it’s illegal. Here, it’s ‘It’s better to ask forgiveness than to ask permission.’” Paz is a veteran of the digital news business. The saying has to do with his approach to scraping public data from governments that may be slow to share it.

-

The real story in the NSA scandal is the collapse of journalism (zdnet.com)

On Thursday, June 6, the Washington Post published a bombshell of a story, alleging that nine giants of the tech industry had “knowingly participated” in a widespread program by the United States National Security Agency (NSA). One day later, with no acknowledgment except for a change in the timestamp, the Post revised the story, backing down from sensational claims it made originally. But the damage was already done.

-

We are shocked, shocked… (davidsimon.com)

Having labored as a police reporter in the days before the Patriot Act, I can assure all there has always been a stage before the wiretap, a preliminary process involving the capture, retention and analysis of raw data. It has been so for decades now in this country. The only thing new here, from a legal standpoint, is the scale on which the FBI and NSA are apparently attempting to cull anti-terrorism leads from that data. But the legal and moral principles? Same old stuff.

-

Big Data Has Big Stage at Personal Democracy Forum (pbs.org)

Engaging News Project’s Talia Stroud tackled the issue of public engagement in news organizations. Polls on websites don’t yield scientifically accurate results, nor do they get people to address difficult issues, she said. “These data are junk. We know they’re junk,” Stroud said. “City council representatives know they’re junk. Even news organizations know that the results of these data are junk. The only reason that this poll is being included on the news organization’s site is to increase interactivity and increase your time on page.”

Strata Week: Movers and shakers on the data journalism front

Reuters' Connected China, accessing Pew's datasets, Simon Rogers' move to Twitter, data privacy solutions, and Intel's shift away from chips.

Reuters launches Connected China, Pew instructs on downloading its data, and Twitter gets a data editor

Yue Qiu and Wenxiong Zhang took a look this week at a data journalism effort by Reuters, the Connected China visualization application. Qiu and Zhang report that “[o]ver the course of about 18 months, a dozen bilingual reporters based in Hong Kong dug into government websites, government reports, policy papers, Mainland major publications, English news reporting, academic texts, and think-tank reports to build up the database.”

Finding and telling data-driven stories in billions of tweets

Twitter has hired Guardian Data editor Simon Rogers as its first data editor.

Twitter has hired its first data editor. Simon Rogers, one of the leading practitioners of data journalism in the world, will join Twitter in May. He will be moving his family from London to San Francisco and applying his skills to telling data-driven stories using tweets. James Ball will replace him as the Guardian’s new data editor.

As a data editor, will Rogers keep editing and producing something that we’ll recognize as journalism? Will his work at Twitter be different than what Google Think or Facebook Stories delivers? Different in terms of how he tells stories with data? Or is the difference that Twitter has a lot more revenue coming in or sees data-driven storytelling as core to driving more business? (Rogers wouldn’t comment on those counts.)

Sprinting toward the future of Jamaica

Open data is fundamental to democratic governance and development, say Jamaican officials and academics.

Creating the conditions for startups to form is now a policy imperative for governments around the world, as Julian Jay Robinson, minister of state in Jamaica’s Ministry of Science, Technology, Energy and Mining, reminded the attendees at the “Developing the Caribbean” conference last week in Kingston, Jamaica.

Robinson said Jamaica is working on deploying wireless broadband access, securing networks and stimulating tech entrepreneurship around the island, a set of priorities that would have sounded of the moment in Washington, Paris, Hong Kong or Bangalore. He also described open access and open data as fundamental parts of democratic governance, explicitly aligning the release of public data with economic development and anti-corruption efforts. Robinson also pledged to help ensure that Jamaica’s open data efforts would be successful, offering a key ally within government to members of civil society.

The interest in adding technical ability and capacity around the Caribbean was sparked by other efforts around the world, particularly Kenya’s open government data efforts. That’s what led the organizers to invite Paul Kukubo to speak about Kenya’s experience, which Robinson noted might be more relevant to Jamaica than that of the global north.

Sensoring the news

Sensor journalism will augment our ability to understand the world and hold governments accountable.

When I went to the 2013 SXSW Interactive Festival to host a conversation with NPR’s Javaun Moradi about sensors, society and the media, I thought we would be talking about the future of data journalism. By the time I left the event, I’d learned that sensor journalism had long since arrived and been applied. Today, inexpensive, easy-to-use open source hardware is making it easier for media outlets to create data themselves.

“Interest in sensor data has grown dramatically over the last year,” said Moradi. “Groups are experimenting in the areas of environmental monitoring, journalism, human rights activism, and civic accountability.” His post on what sensor networks mean for journalism sparked our collaboration after we connected in December 2011 about how data was being used in the media.

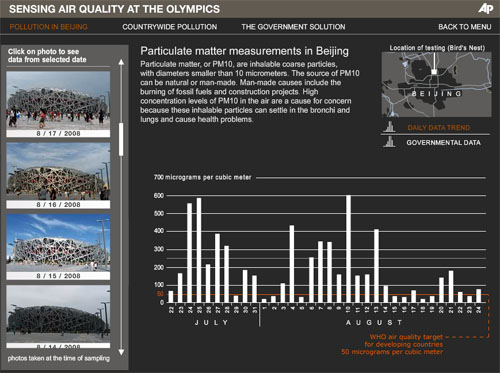

Associated Press visualization of Beijing air quality. See related feature.

At a SXSW panel on “sensoring the news,” Sarah Williams, an assistant professor at MIT, described how the Spatial Information Design Lab at Columbia University had partnered with the Associated Press to independently measure air quality in Beijing.

Prior to the 2008 Olympics, the coaches of the Olympic teams had expressed serious concern about the impact of air pollution on the athletes. That, in turn, put pressure on the Chinese government to take substantive steps to improve those conditions. While the Chinese government released an index of air quality, explained Williams, they didn’t explain what went into it, nor did they provide the raw data.

The Beijing Air Tracks project arose from the need to determine what the conditions on the ground really were. AP reporters carried sensors connected to their cellphones to detect particulate and carbon monoxide levels, enabling them to report air quality conditions back in real-time as they moved around the Olympic venues and city.

Visualization of the Week: Sequester cuts by state

Data journalist Ryan Murphy dug into the White House sequester cut data to create a visualization of the economic impact of the sequester.

The sequester went into effect in the U.S. on Friday, and media outlets are busy fleshing out practical consequences and looking for solutions. Ryan Murphy at The Texas Tribune dug into the state-level data released by the White House (in PDF files, no less), converted it into a more user-friendly format and created an interactive visualization detailing the economic effect of the sequester cuts on each state in nine categories.

Looking at the many faces and forms of data journalism

Leading experts on data-driven storytelling came together in our recent Google+ Hangout.

Over the past year, I’ve been investigating data journalism. In that work, I’ve found no better source for understanding the who, where, what, how and why of what’s happening in this area than the journalists who are using and even building the tools needed to make sense of the exabyte age. Yesterday, I hosted a Google Hangout with several notable practitioners of data journalism. Video of the discussion is embedded below:

Over the course of the discussion, we talked about what data journalism is, how journalists are using it, the importance of storytelling, ethics, the role of open source and “showing your work” and much more.