"data analytics" entries

Is 2016 the year you let robots manage your money?

The O’Reilly Data Show podcast: Vasant Dhar on the race to build “big data machines” in financial investing.

Subscribe to the O’Reilly Data Show Podcast to explore the opportunities and techniques driving big data and data science.

In this episode of the O’Reilly Data Show, I sat down with Vasant Dhar, a professor at the Stern School of Business and Center for Data Science at NYU, founder of SCT Capital Management, and editor-in-chief of the Big Data Journal (full disclosure: I’m a member of the editorial board). We talked about the early days of AI and data mining, and recent applications of data science to financial investing and other domains.

Dhar’s first steps in applying machine learning to finance

I joke with people, I say, ‘When I first started looking at finance, the only thing I knew was that prices go up and down.’ It was only when I actually went to Morgan Stanley and took time off from academia that I learned about finance and financial markets. … What I really did in that initial experiment is I took all the trades, I appended them with information about the state of the market at the time, and then I cranked it through a genetic algorithm and a tree induction algorithm. … When I took it to the meeting, it generated a lot of really interesting discussion. … Of course, it took several months before we actually finally found the reasons for why I was observing what I was observing.

Patrick Wendell on Spark’s roadmap, Spark R API, and deep learning on the horizon

The O’Reilly Radar Podcast: A special holiday cross-over of the O’Reilly Data Show Podcast.

Subscribe to the O’Reilly Radar Podcast to track the technologies and people that will shape our world in the years to come.

In this special holiday episode of the Radar Podcast, we’re featuring a cross-over of the O’Reilly Data Show Podcast, which you can find on iTunes, Stitcher, TuneIn, or SoundCloud. O’Reilly’s Ben Lorica hosts that podcast, and in this episode, he chats with Apache Spark release manager and Databricks co-founder Patrick Wendell about the roadmap of Spark and where it’s headed, and interesting applications he’s seeing in the growing Spark ecosystem.

Here are some highlights from their chat:

We were really trying to solve research problems, so we were trying to work with the early users of Spark, getting feedback on what issues it had and what types of problems they were trying to solve with Spark, and then use that to influence the roadmap. It was definitely a more informal process, but from the very beginning, we were expressly user driven in the way we thought about building Spark, which is quite different than a lot of other open source projects. … From the beginning, we were focused on empowering other people and building platforms for other developers.

One of the early users was Conviva, a company that does analytics for real-time video distribution. They were a very early user of Spark, they continue to use it today, and a lot of their feedback was incorporated into our roadmap, especially around the types of APIs they wanted to have that would make data processing really simple for them, and of course, performance was a big issue for them very early on because in the business of optimizing real-time video streams, you want to be able to react really quickly when conditions change. … Early on, things like latency and performance were pretty important.

Building a scalable platform for streaming updates and analytics

The O’Reilly Data Show podcast: Evan Chan on the early days of Spark+Cassandra, FiloDB, and cloud computing.

In this episode of the O’Reilly Data Show, I sit down with Evan Chan, distinguished engineer at Tuplejump. We talk about the early days of Spark (particularly his contributions to Spark/Cassandra integration), his interesting new open source project (FiloDB), and recent trends in cloud computing.

Bringing Apache Spark & Apache Cassandra together

Datastax credits me with inspiring them to bring Spark into Cassandra … I think they’re very generous about that. I think I was one of the first folks to talk about the possibility of bringing Cassandra and Spark together. The vision that I saw was that Cassandra was really good for real-time updates, but what if we’re able to do more analytical queries on it? Then you could combine, basically, a platform that is really good for real-time updates with analytics.

Pattern recognition and sports data

The O’Reilly Data Show Podcast: Award-winning journalist David Epstein on the (data) science of sports.

Sign up now to receive a free download of the new O’Reilly report “Data Analytics in Sports: How Playing with Data Transforms the Game” when it publishes this fall.

Julien Vervaecke and Maurice Geldhof smoking a cigarette at the 1927 Tour de France. Public domain photo via Wikimedia Commons.

In a recent episode of the O’Reilly Data Show Podcast, I spoke with Epstein about his book, data science and sports, and his recent series of articles detailing suspicious practices at one of the world’s premier track and field training programs (the Oregon Project).

Nature/nurture and hardware/software

Epstein’s book contains examples of sports where athletes with certain physical attributes start off with an advantage. In relation to that, we discussed feature selection and feature engineering — the relative importance of factors like training methods, technique, genes, equipment, and diet — topics which Epstein has written about and studied extensively:

One of the most important findings in sports genetics is that your ability to improve with respect to a certain training program is mediated by your genes, so it’s really important to find the kind of training program that’s best tailored to your physiology. … The skills it takes for team sports, these perceptual skills, nobody is born with those. Those are completely software, to use the computer analogy. But it turns out that once the software is downloaded, it’s like a computer. While your hardware doesn’t do anything alone without software, once you’ve got the software, the hardware actually makes a lot of a difference in how good of an operating machine you have. It can be obscured when people don’t study it correctly, which is why I took on some of the 10,000 hours stuff. Read more…

Real-time, not batch-time, analytics with Hadoop

How big data, fast data, and real-time analytics work together in the real world.

Attend the VoltDB webcast on June 24, 2015 with John Hugg to learn more about how to build a fast data front-end to Hadoop.

Today, we often hear the phrase “The 3 Vs” in relation to big data: Volume, Variety and Velocity. With the interest in and popularity of big data frameworks such as Hadoop, the focus has mostly centered on volume and data at rest. Common requirements here are data ingestion, batch processing, and distributed queries. These are well understood. Increasingly, however, there is a need to manage and process data as it arrives, in real time. There may be great value in the immediacy of that data and the ability to act upon it very quickly. This is velocity and data in motion, also known as “fast data.” Fast data has become increasingly important within the past few years due to the growth in endpoints that now stream data in real time.

Big data + fast data is a powerful combination. However, it is adding real-time analytics to this mix that provides the business value. Let’s look at a real example, originally described by Scott Jarr of VoltDB.

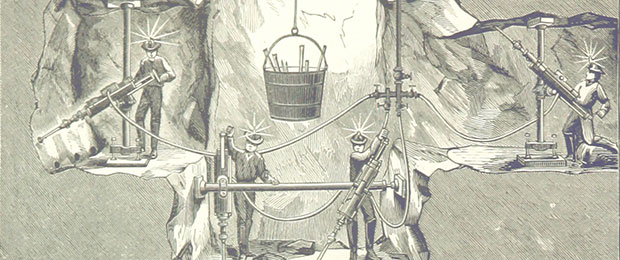

Consider a company that builds systems to manage physical assets in precious metal mines. Inside a mine, there are sensors on miners as well as shovels and other assets. For a lost shovel, minutes or hours of reporting latency may be acceptable. However, a sensor on a miner indicating a stopped heart demands immediate attention. The system should, therefore, be able to receive very fast data. Read more…

Commodity data analytics for health care

Predixion service could signal a trend for smaller health facilities.

Analytics are expensive and labor intensive; we need them to be routine and ubiquitous. I complained earlier this year that analytics are hard for health care providers to muster because there’s a shortage of analysts and because every data-driven decision takes huge expertise.

Currently, only major health care institutions such as Geisinger, the Mayo Clinic, and Kaiser Permanente incorporate analytics into day-to-day decisions. Research facilities employ analytics teams for clinical research, but perhaps not so much for day-to-day operations. Large health care providers can afford departments of analysts, but most facilities — including those forming accountable care organizations — cannot.

Imagine that you are running a large hospital and are awake nights worrying about the Medicare penalty for readmitting patients within 30 days of their discharge. Now imagine you have access to analytics that can identify about 40 measures that combine to predict a readmission, and a convenient interface is available to tell clinicians in a simple way which patients are most at risk of readmission. Better still, the interface suggests specific interventions to reduce readmission risk: giving the patient a 30-day supply of medication, arranging transportation to rehab appointments, etc. Read more…

What the data world can learn from the fashion industry

How generating conversations can become one of the most important data assets for any organization.

At O’Reilly Research, we focus our attention on trends in technology adoption — which tools are adopted and in which industries. In doing so, we uncover interesting cross-disciplinary opportunities and discover what we can learn from innovations in other fields.

We’ve recently learned about the increasing role of data in the fashion industry, so we set out to uncover some of the players who are making disruptive changes using technology and analytics.

Our team asked Liza Kindred, founder of Third Wave Fashion, and Julie Steele, coauthor of Beautiful Visualization and Designing Data Visualizations, to take a closer look at these developments in their new report, “Fashioning Data: How fashion industry leaders innovate with data and what you can learn from what they know.” We think you’ll find some surprising applications of data and analytics in the fashion industry — applications that are useful regardless of the industry or organization you work within. And, we know we’re just at the beginning of what is likely a growing trend. Read more…

Predictive data analytics is saving lives and taxpayer dollars in New York City

Michael Flowers explains why applying data science to regulatory data is necessary to use city resources better.

A predictive data analytics team in the Mayor’s Office of New York City has been quietly using data science to find patterns in regulatory data that can then be applied to law enforcement, public safety, public health, and better allocation of taxpayer resources.