"sensor networks" entries

Four short links: 9 November 2015

Smart Sensors, Learning Autopilot, Higher Education, and 3D Soccer

- Low-Power Deep Learning — it’s a media release for proprietary tech, but interesting that people are working on low-power deep learning neural nets. As Pete Warden noted, this kind of research will be at the center of smart sensors. (via Pete Warden)

- Tesla’s Self-Improving Autopilot — it learns when you “rescue” (aka take control back from autopilot), so it’s getting better day by day. Musk said that Model S owners could add ~1 million miles of new data every day, which is helping the company create “high-precision maps.” Navteq, Google Maps, Waze … new map data is still valuable.

- The Digital Revolution in Higher Education Has Already Happened (Clay Shirky) — and no-one noticed. I read half of this before going “holy crap this is good, who wrote it?” I’m a Shirky junkie (I bet his laundry lists cite Habermas and the Peace of Westphalia). At the current rate of growth, half the country’s undergraduates will have at least one online class on their transcripts by the end of the decade. This is the new normal. But, As long as we discuss online education as a pedagogic revolution rather than an organizational one, we aren’t even having the right kind of conversation. The dramatic adoption of online education is not mainly a change in the content of classes. It’s a change in the institutional form of college, a demand for more flexibility by students who have to manage the increasingly complicated triangle of work, family, and school.

- System Automatically Converts 2-D to 3-D (MIT) — hilarious strategy! They constrained their domain: broadcast soccer games. The MIT and QCRI researchers essentially ran this process in reverse. They set the very realistic Microsoft soccer game “FIFA13” to play over and over again, and used Microsoft’s video-game analysis tool PIX to continuously store screen shots of the action. For each screen shot, they also extracted the corresponding 3-D map. […] For every frame of 2-D video of an actual soccer game, the system looks for the 10 or so screen shots in the database that best correspond to it. Then it decomposes all those images, looking for the best matches between smaller regions of the video feed and smaller regions of the screen shots. Once it’s found those matches, it superimposes the depth information from the screen shots on the corresponding sections of the video feed. Finally, it stitches the pieces back together. Brute-forcing soccer. Ok, perhaps “hilarious” for a certain type of person. I am that person.
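For the curious, the depth-transfer idea described in the 2-D to 3-D soccer item can be sketched in a few lines of Python. This is a toy illustration, not the MIT/QCRI system: the function names, the sum-of-squared-differences similarity, the tiny block size, and the restriction to comparing blocks at the same position are all assumptions made for brevity, and the final stitching and smoothing step is left out.

```python
def ssd(a, b):
    """Sum of squared differences between two equally sized 2-D grids."""
    return sum((p - q) ** 2 for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def crop(img, y, x, size):
    """Cut a size-by-size block (smaller at the edges) out of a 2-D grid."""
    return [row[x:x + size] for row in img[y:y + size]]


def transfer_depth(frame, shots, depths, k=10, block=2):
    """Hypothetical helper: borrow depth for `frame` from similar screenshots."""
    # Step 1: keep the k screenshots that look most like the whole frame.
    ranked = sorted(range(len(shots)), key=lambda i: ssd(frame, shots[i]))[:k]
    h, w = len(frame), len(frame[0])
    out = [[0.0] * w for _ in range(h)]
    # Step 2: for each block, find the candidate whose block matches best.
    for y in range(0, h, block):
        for x in range(0, w, block):
            patch = crop(frame, y, x, block)
            best = min(ranked, key=lambda i: ssd(patch, crop(shots[i], y, x, block)))
            # Step 3: copy that candidate's depth values onto the frame's block.
            for dy, row in enumerate(crop(depths[best], y, x, block)):
                out[y + dy][x:x + len(row)] = row
    return out
```

Here `shots` and `depths` stand in for the database of game screenshots and their extracted 3-D maps, each represented as a plain grid of numbers.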

Four short links: 17 August 2015

Fix the Future, Hack a Dash, Inside a $50 Smartphone, and Smartphone Sensors

- Women in Science Fiction Bundle — pay-what-you-want bundle of SF written by women. SF shapes invention, but it’s often a future filled with square-jawed men and chiseled Space Desperados, with women relegated to incidental roles. And lo, the sci-tech industry evolved brogrammers. This bundle is a good start toward a cure. Dare to imagine a future where women are people, too. (via Cory Doctorow)

- How I Hacked Amazon’s $5 WiFi Button to track Baby Data (Ed Benson) — Dash Buttons are small $5 plastic buttons with a battery and a WiFi connection inside. I’m going to show you how to hijack and use these buttons for just about anything you want. (via Flowing Data)

- The Realities of a $50 Smartphone (Engadget) — it can be done, but it literally won’t be pretty. If this thought experiment has revealed anything, it’s that there’s no such thing as a profit in the Android world any more.

- The Pocket Lab — a wireless sensor for smartphones that measures acceleration, force, angular velocity, magnetic field, pressure, altitude, and temperature.

Governments can bridge costs and services gaps with sensor networks

Government sensor networks can streamline processes, cut labor costs, and improve services.

Contributing authors: Andre Bierzynski and Kevin Chrapaty.

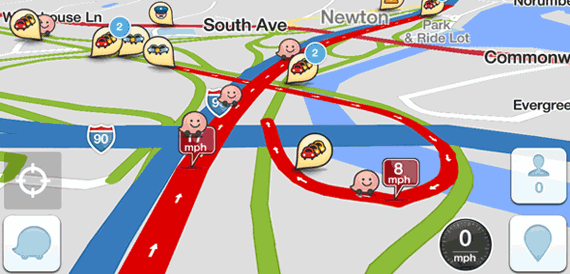

What if government agencies followed in the footsteps of Waze, a community-driven mobile phone app that collects location data through GPS and allows its users to report accidents and traffic jams, providing real-time, location-specific traffic alerts?

It’s not news to anyone who works in government that we live in a time of ever-tighter budgets and ever-increasing needs. The 2013 federal shutdown only highlighted this precarious situation: government finds it increasingly difficult to summon the resources and manpower needed to meet its current responsibilities, yet faces new ones after each Congressional session.

Sensor networks are an important emerging technology that some areas of government are already implementing to bridge the widening gap between the demand to reduce costs and the demand to improve services. The Department of Defense, for instance, uses RFID chips to monitor its supply chain more accurately, while the U.S. Geological Survey employs sensors to remotely monitor the bacterial levels of rivers and lakes in real time. Additionally, the General Services Administration has begun using sensors to measure and verify the energy efficiency of “green” buildings (PDF), and the Department of Transportation relies on sensors to monitor traffic and control traffic signals and roadways. All of this is productive, but more needs to be done.

#IoTH: The Internet of Things and Humans

The IoT requires thinking about how humans and things cooperate differently when things get smarter.

Rod Smith of IBM and I had a call the other day to prepare for our onstage conversation at O’Reilly’s upcoming Solid Conference, and I was surprised to find how much we were in agreement about one idea: so many of the most interesting applications of the Internet of Things involve new ways of thinking about how humans and things cooperate differently when the things get smarter. It really ought to be called the Internet of Things and Humans — #IoTH, not just #IoT!

Let’s start by understanding the Internet of Things as the combination of sensors, a network, and actuators. The “wow” factor — the magic that makes us call it an Internet of Things application — can come from creatively amping up the power of any of the three elements.

For example, a traditional “dumb” thermostat consists of only a sensor and an actuator — when the temperature goes out of the desired range, the heat or air conditioning goes on. The addition of a network, the ability to control your thermostat from your smartphone, say, turns it into a simple #IoT device. But that’s the bare-base case. Consider the Nest thermostat: where it stands out from the crowd of connected thermostats is that it uses a complex of sensors (temperature, moisture, light, and motion) as well as both onboard and cloud software to provide a rich and beautiful UI with a great deal more intelligence.
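The sensor, actuator, and network split in the thermostat example can be made concrete with a small sketch. The class names, the string commands, and the dictionary-shaped message are all hypothetical; nothing here reflects how Nest or any real thermostat is programmed.

```python
class Thermostat:
    """A "dumb" thermostat: one sensor reading in, one actuator decision out."""

    def __init__(self, low, high):
        self.low, self.high = low, high

    def decide(self, temperature):
        # Actuator logic: act only when the reading leaves the desired range.
        if temperature < self.low:
            return "heat"
        if temperature > self.high:
            return "cool"
        return "off"


class NetworkedThermostat(Thermostat):
    """Adds the third element, a network: the range can be changed remotely."""

    def handle_message(self, message):
        # `message` stands in for a command arriving from a phone app.
        self.low = message.get("low", self.low)
        self.high = message.get("high", self.high)
```

The “smarter” devices in the excerpt would add more sensor inputs and more software around `decide`, but the three-part shape stays the same.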

Four short links: 10 April 2014

Rise of the Patent Troll, Farm Data, The Block Chain, and Better Writing

- Rise of the Patent Troll: Everything is a Remix (YouTube) — primer on patent trolls, in language anyone can follow. Part of the fixpatents.org campaign. (via BoingBoing)

- Petabytes of Field Data (GigaOm) — Farm Intelligence using sensors and computer vision to generate data for better farm decision making.

- Bullish on Blockchain (Fred Wilson) — our 2014 fund will be built during the blockchain cycle. “The blockchain” is bitcoin’s distributed consensus system, interesting because it’s the return of p2p from the Chasm of Ridicule or whatever the Gartner Trite Cycle calls the time between first investment bubble and second investment bubble under another name.

- Hemingway — online writing tool to help you make your writing clear and direct. (via Nina Simon)

Four short links: 31 March 2014

Game Patterns, What Next, GPU vs CPU, and Privacy with Sensors

- Game Programming Patterns — a book in progress.

- Search for the Next Platform (Fred Wilson) — Mobile is now the last thing. And all of these big tech companies are looking for the next thing to make sure they don’t miss it. And they will pay real money (to you and me) for a call option on the next thing.

- Debunking the 100X GPU vs. CPU Myth — in Pete Warden’s words, “in a lot of real applications any speed gains on the computation side are swamped by the time it takes to transfer data to and from the graphics card.”

- Privacy in Sensor-Driven Human Data Collection (PDF) — see especially the section “Attacks Against Privacy”. More generally, it is often the case that the data released by researchers is not the source of privacy issues, but the unexpected inferences that can be drawn from it. (via Pete Warden)
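The point in the GPU vs. CPU item above comes down to simple arithmetic, sketched here. The function name and every number in the usage note are made up for illustration; they are not measurements from the linked piece.

```python
def effective_speedup(cpu_seconds, kernel_speedup, transfer_seconds):
    """End-to-end speedup once time spent moving data to and from the card is counted."""
    gpu_seconds = cpu_seconds / kernel_speedup + transfer_seconds
    return cpu_seconds / gpu_seconds
```

With a hypothetical 100-second CPU job, a 100x faster GPU kernel, and 4 seconds of data transfer, `effective_speedup(100, 100, 4)` works out to 20x rather than 100x, because the transfer time dominates the 1 second of GPU computation.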

Four short links: 28 March 2014

Javascript on Glass, Smart Lights, Hardware Startups, MySQL at Scale

- WearScript — open source project putting Javascript on Glass. See story on it. (via Slashdot)

- Mining the World’s Data by Selling Street Lights and Farm Drones (Quartz) — Depending on what kinds of sensors the light’s owners choose to install, Sensity’s fixtures can track everything from how much power the lights themselves are consuming to movement under the post, ambient light, and temperature. More sophisticated sensors can measure pollution levels, radiation, and particulate matter (for air quality levels). The fixtures can also support sound or video recording. Bring these lights onto city streets and you could isolate the precise location of a gunshot within seconds.

- An Investor’s Guide to Hardware Startups — good to know if you’re thinking of joining one, too.

- WebScaleSQL — a MySQL downstream patchset built for “large scale” (aka Google, Facebook type loads).

iBeacon basics

Proximity is the 'Hello World' of mobility.

As any programmer knows, writing the “hello, world” program is the canonical elementary exercise in any new programming language. Getting devices to interact with the world is the foundation of the Internet of Things, and enabling devices to learn about their surroundings is the “hello world” of mobility.

On a recent trip to Washington D.C., I attended the first DC iBeacon Meetup. iBeacons are exciting. Retailers are revolutionizing shopping by applying new indoor proximity technologies and developing the physical world analog of the data that a web-based retailer like Amazon can routinely collect. A few days ago, I tweeted about an analysis of the beacon market, which noted that “[beacons] are poised to transform how retailers, event organizers, transit systems, enterprises, and educational institutions communicate with people indoors” — and could even be used in home automation systems.

I got to see the ground floor of the disruption in action at the meetup in DC, which featured presentations by a few notable local companies, including Radius Networks, the developer of the CES scavenger hunt app for iOS. When I first heard of the app, I almost bought a ticket to Las Vegas to experience the app for myself, so it was something of a cool moment to hear about the technology from the developer of an application that I’d admired from afar.

After the presentations, I had a chance to talk with David Helms of Radius. Helms was drawn to work at Radius for the same reason I was compelled to attend the iBeacon meetup. As he put it, “The first step in extending the mobile computing experience beyond the confines of that slab of glass in your pocket is when it can recognize the world around it and interact with it, and proximity is the ‘Hello’ of the Internet of Things revolution.”

Four short links: 16 July 2013

Sensor Networks, Programming Silliness, Higher Order C, and Meeting Silliness

- Pete Warden on Sensors — We’re all carrying little networked laboratories in our pockets. You see a photo. I see millions of light-sensor readings at an exact coordinate on the earth’s surface with a time resolution down to the millisecond. The future is combining all these signals into new ways of understanding the world, like this real-time stream of atmospheric measurements.

- Quine Relay — This is a Ruby program that generates Scala program that generates Scheme program that generates …(through 50 languages)… REXX program that generates the original Ruby code again.

- Cello — a GNU99 C library which brings higher level programming to C. Interfaces allow for structured design, Duck Typing allows for generic functions, Exceptions control error handling, Constructors/Destructors aid memory management, Syntactic Sugar increases readability.

- The Meeting (John Birmingham) — satirising the Wall Street Journal’s meeting checklist advice.

Four short links: 10 April 2013

Street View Tiles Hacks, Policy Simulation, Map Tile Toolbox, and Connected Sensor Device HowTo

- HyperLapse — this won the Internet for April. Everyone else can go home. Check out this unbelievable video; the source is available.

- Housing Simulator — NZ’s largest city is consulting on its growth plan, and includes a simulator so you can decide where the growth to house the hundreds of thousands of predicted residents will come from. Reminds me of NPR’s Budget Hero. Notice that none of the levers control immigration or city taxes to make different cities attractive or unattractive. Growth is a given and you’re left trying to figure out which green fields to pave.

- Converting To and From Google Map Tile Coordinates in PostGIS (Pete Warden) — Google Maps’ system of power-of-two tiles has become a de facto standard, widely used by all sorts of web mapping software. I’ve found it handy to use as a caching scheme for our data, but the PostGIS calls to use it were getting pretty messy, so I wrapped them up in a few functions. Code on github.

- So You Want to Build A Connected Sensor Device? (Google Doc) — The purpose of this document is to provide an overview of infrastructure, options, and tradeoffs for the parts of the data ecosystem that deal with generating, storing, transmitting, and sharing data. In addition to providing an overview, the goal is to learn what the pain points are, so we can address them. This is a collaborative document drafted for the purpose of discussion and contribution at Sensored Meetup #10. (via Rachel Kalmar)
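As a companion to the map tile item above, here is the widely used Web Mercator tile arithmetic written out in Python. It is a sketch of the general power-of-two tiling scheme, not Pete Warden’s PostGIS functions; the function names and the clamping at the map edge are assumptions.

```python
import math


def lat_lon_to_tile(lat, lon, zoom):
    """Return the (x, y) tile containing a point at the given zoom level."""
    n = 2 ** zoom  # tiles per side doubles with every zoom level
    x = int((lon + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * n)
    # Clamp so points on the far edge still land in a valid tile.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tile_to_lat_lon(x, y, zoom):
    """Return the (lat, lon) of a tile's north-west corner."""
    n = 2 ** zoom
    lon = x / n * 360.0 - 180.0
    lat = math.degrees(math.atan(math.sinh(math.pi * (1.0 - 2.0 * y / n))))
    return lat, lon
```

Because every point maps to exactly one `(zoom, x, y)` triple, the triple makes a convenient cache key, which is the use the linked post describes.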