Use code DATA50 to get 50% off of the new early release of “Fundamentals of Deep Learning: Designing Next-Generation Artificial Intelligence Algorithms.” Editor’s note: This is an excerpt of “Fundamentals of Deep Learning,” by Nikhil Buduma.

The brain is the most incredible organ in the human body. It dictates the way we perceive every sight, sound, smell, taste, and touch. It enables us to store memories, experience emotions, and even dream. Without it, we would be primitive organisms, incapable of anything other than the simplest of reflexes. The brain is, inherently, what makes us intelligent.

The infant brain only weighs a single pound, but somehow, it solves problems that even our biggest, most powerful supercomputers find impossible. Within a matter of days after birth, infants can recognize the faces of their parents, discern discrete objects from their backgrounds, and even tell apart voices. Within a year, they’ve already developed an intuition for natural physics, can track objects even when they become partially or completely blocked, and can associate sounds with specific meanings. And by early childhood, they have a sophisticated understanding of grammar and thousands of words in their vocabularies.

For decades, we’ve dreamed of building intelligent machines with brains like ours — robotic assistants to clean our homes, cars that drive themselves, microscopes that automatically detect diseases. But building these artificially intelligent machines requires us to solve some of the most complex computational problems we have ever grappled with, problems that our brains can already solve in a manner of microseconds. To tackle these problems, we’ll have to develop a radically different way of programming a computer using techniques largely developed over the past decade. This is an extremely active field of artificial computer intelligence often referred to as deep learning.

The limits of traditional computer programs

Why exactly are certain problems so difficult for computers to solve? Well it turns out, traditional computer programs are designed to be very good at two things: 1) performing arithmetic really fast and 2) explicitly following a list of instructions. So, if you want to do some heavy financial number crunching, you’re in luck. Traditional computer programs can do just the trick. But let’s say we want to do something slightly more interesting, like write a program to automatically read someone’s handwriting.

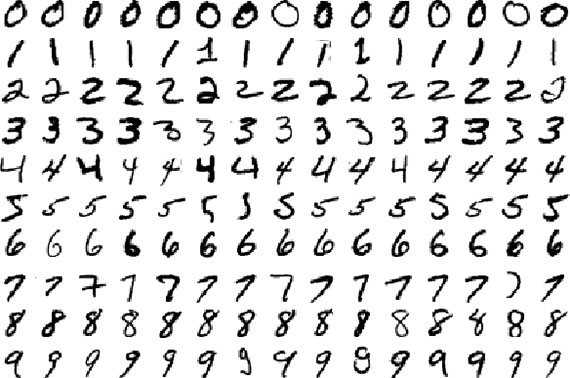

Figure 1-1.

Although every digit in Figure 1-1, above, is written in a slightly different way, we can easily recognize every digit in the first row as a zero, every digit in the second row as a one, etc. Let’s try to write a computer program to crack this task. What rules could we use to tell one digit from another?

Well, we can start simple! For example, we might state that we have a zero if our image only has a single closed loop. All the examples in Figure 1-1 seem to fit this bill, but this isn’t really a sufficient condition. What if someone doesn’t perfectly close the loop on their zero? And, as in Figure 1-2, left, how do you distinguish a messy zero from a six?

Figure 1-2.

There are many other classes of problems that fall into this same category: object recognition, speech comprehension, automated translation, etc. We don’t know what program to write because we don’t know how it’s done by our brains. And even if we did know how to do it, the program might be horrendously complicated.

The mechanics of machine learning

To tackle these classes of problems, we’ll have to use a very different kind of approach. A lot of the things we learn in school growing up have a lot in common with traditional computer programs. We learn how to multiply numbers, solve equations, and take derivatives by internalizing a set of instructions. But the things we learn at an extremely early age, the things we find most natural, are learned by example, not by formula.

For example, when we were two years old, our parents didn’t teach us how to recognize a dog by measuring the shape of its nose or the contours of its body. We learned to recognize a dog by being shown multiple examples and being corrected when we made the wrong guess. In other words, when we were born, our brains provided us with a model that described how we would be able to see the world. As we grew up, that model would take in our sensory inputs and make a guess about what we’re experiencing. If that guess was confirmed by our parents, our model would be reinforced. If our parents said we were wrong, we’d modify our model to incorporate this new information. Over our lifetimes, our model becomes more and more accurate as we assimilate billions of examples. Obviously, all of this happens subconsciously, without us even realizing it, but we can use this to our advantage nonetheless.

Deep learning is a subset of a more general field of artificial intelligence called machine learning, which is predicated on this idea of learning from example. In machine learning, instead of teaching a computer a massive list of rules to solve the problem, we give it a model with which it can evaluate examples and a small set of instructions to modify the model when it makes a mistake. We expect that, over time, a well-suited model would be able to solve the problem extremely accurately.

To learn more, buy the early release of “Fundamentals of Deep Learning,” by Nikhil Buduma.

Cropped public domain image on article and category pages via Pixabay.