"Big Data and Artificial Intelligence: Intelligence Matters" entries

We make the software, you make the robots

An interview with Andreas Mueller, on scikit-learn and usable machine learning software.

Superpixels example from Andreas Mueller’s thesis paper (PDF), used with permission.

Mueller wears many hats at work. He is one of the key maintainers of the popular Python machine learning library scikit-learn. Holding a doctorate in computer vision from the University of Bonn in Germany, he currently works on open science at New York University’s Center for Data Science. He speaks at conferences around the world and has a fanbase of 5,000+ followers on Twitter and about as many reputation points on Stack Overflow. In other words, this man has got mad street cred. He started out doing pure math in academia, and has now achieved software developer cult idol status. Read more…

Understanding neural function and virtual reality

The O'Reilly Data Show Podcast: Poppy Crum explains that what matters is efficiency in identifying and emphasizing relevant data.

Like many data scientists, I’m excited about advances in large-scale machine learning, particularly recent success stories in computer vision and speech recognition. But I’m also cognizant of the fact that press coverage tends to inflate what current systems can do, and their similarities to how the brain works.

During the latest episode of the O’Reilly Data Show Podcast, I had a chance to speak with Poppy Crum, a neuroscientist who gave a well-received keynote at Strata + Hadoop World in San Jose. She leads a research group at Dolby Labs and teaches a popular course at Stanford on Neuroplasticity in Musical Gaming. I wanted to get her take on AI and virtual reality systems, and hear about her experience building a team of researchers from diverse disciplines.

Understanding neural function

While it can sometimes be nice to mimic nature, in the case of the brain, machine learning researchers recognize that understanding and identifying the essential neural processes is much more critical. A related example cited by machine learning researchers is flight: wing flapping and feathers aren’t critical, but an understanding of physics and aerodynamics is essential.

Crum and other neuroscience researchers express the same sentiment. She points out that a more meaningful goal should be to “extract and integrate relevant neural processing strategies when applicable, but also identify where there may be opportunities to be more efficient.”

The goal in technology shouldn’t be to build algorithms that mimic neural function. Rather, it’s to understand neural function. … The brain is basically, in many cases, a Rube Goldberg machine. We’ve got this limited set of evolutionary building blocks that we are able to use to get to a sort of very complex end state. We need to be able to extract when that’s relevant and integrate relevant neural processing strategies when it’s applicable. We also want to be able to identify that there are opportunities to be more efficient and more relevant. I think of it as table manners. You have to know all the rules before you can break them. That’s the big difference between being really cool or being a complete heathen. The same thing kind of exists in this area. How we get to the end state, we may be able to compromise, but we absolutely need to be thinking about what matters in neural function for perception. From my world, where we can’t compromise is on the output. I really feel like we need a lot more work in this area. Read more…

Data has a shape

Using topology to uncover the shape of your data: An interview with Gurjeet Singh.

Get notified when our free report, “Future of Machine Intelligence: Perspectives from Leading Practitioners,” is available for download. The following interview is one of many that will be included in the report.

As part of our ongoing series of interviews surveying the frontiers of machine intelligence, I recently interviewed Gurjeet Singh. Singh is CEO and co-founder of Ayasdi, a company that leverages machine intelligence software to automate and accelerate discovery of data insights. Author of numerous patents and publications in top mathematics and computer science journals, Singh has developed key mathematical and machine learning algorithms for topological data analysis.

Key Takeaways

- The field of topology studies the mapping of one space into another through continuous deformations.

- Machine learning algorithms produce functional mappings from an input space to an output space and lend themselves to be understood using the formalisms of topology.

- A topological approach allows you to study data sets without assuming a shape beforehand and to combine various machine learning techniques while maintaining guarantees about the underlying shape of the data.

David Beyer: Let’s get started by talking about your background and how you got to where you are today.

Gurjeet Singh: I am a mathematician and a computer scientist, originally from India. I got my start in the field at Texas Instruments, building integrated software and performing digital design. While at TI, I got to work on a project using clusters of specialized chips called Digital Signal Processors (DSPs) to solve computationally hard math problems.

As an engineer by training, I had a visceral fear of advanced math. I didn’t want to be found out as a fake, so I enrolled in the Computational Math program at Stanford. There, I was able to apply some of my DSP work to solving partial differential equations and demonstrate that a fluid dynamics researcher need not buy a supercomputer anymore; they could just employ a cluster of DSPs to run the system. I then spent some time in mechanical engineering building similar GPU-based partial differential equation solvers for mechanical systems. Finally, I worked in Andrew Ng’s lab at Stanford, building a quadruped robot and programming it to learn to walk by itself. Read more…

Augmenting the human experience: AR, wearable tech, and the IoT

As augmented reality technologies emerge, we must place the focus on serving human needs.


Augmented reality (AR), wearable technology, and the Internet of Things (IoT) are all really about human augmentation. They are coming together to create a new reality that will forever change the way we experience the world. As these technologies emerge, we must place the focus on serving human needs.

The Internet of Things and Humans

Tim O’Reilly suggested the word “Humans” be appended to the term IoT. “This is a powerful way to think about the Internet of Things because it focuses the mind on the human experience of it, not just the things themselves,” wrote O’Reilly. “My point is that when you think about the Internet of Things, you should be thinking about the complex system of interaction between humans and things, and asking yourself how sensors, cloud intelligence, and actuators (which may be other humans for now) make it possible to do things differently.”

I share O’Reilly’s vision for the IoTH and propose we extend this perspective and apply it to the new AR that is emerging: let’s take the focus away from the technology and instead emphasize the human experience.

The definition of AR we have come to understand is a digital layer of information (including images, text, video, and 3D animations) viewed on top of the physical world through a smartphone, tablet, or eyewear. This definition of AR is expanding to include things like wearable technology, sensors, and artificial intelligence (AI) to interpret your surroundings and deliver a contextual experience that is meaningful and unique to you. It’s about a new sensory awareness, deeper intelligence, and heightened interaction with our world and each other. Read more…

Building intelligent machines

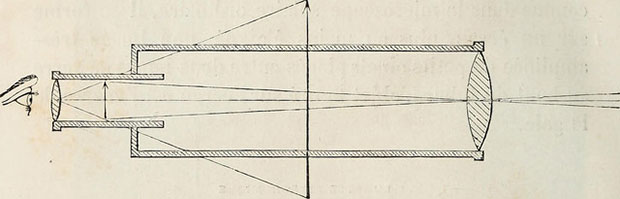

To understand deep learning, let’s start simple.

Editor’s note: This is an excerpt of “Fundamentals of Deep Learning: Designing Next-Generation Artificial Intelligence Algorithms,” by Nikhil Buduma.

The brain is the most incredible organ in the human body. It dictates the way we perceive every sight, sound, smell, taste, and touch. It enables us to store memories, experience emotions, and even dream. Without it, we would be primitive organisms, incapable of anything other than the simplest of reflexes. The brain is, inherently, what makes us intelligent.

The infant brain only weighs a single pound, but somehow, it solves problems that even our biggest, most powerful supercomputers find impossible. Within a matter of days after birth, infants can recognize the faces of their parents, discern discrete objects from their backgrounds, and even tell apart voices. Within a year, they’ve already developed an intuition for natural physics, can track objects even when they become partially or completely blocked, and can associate sounds with specific meanings. And by early childhood, they have a sophisticated understanding of grammar and thousands of words in their vocabularies.

For decades, we’ve dreamed of building intelligent machines with brains like ours: robotic assistants to clean our homes, cars that drive themselves, microscopes that automatically detect diseases. But building these artificially intelligent machines requires us to solve some of the most complex computational problems we have ever grappled with, problems that our brains can already solve in a matter of microseconds. To tackle these problems, we’ll have to develop a radically different way of programming a computer using techniques largely developed over the past decade. This is an extremely active field of artificial computer intelligence often referred to as deep learning. Read more…

The business value of unifying data

Practical applications of human-in-the-loop machine learning.

With hundreds, thousands, or even just tens of suppliers (each with different business units, payment terms, and locations), businesses are faced with a monumental task: unifying all of their supplier-related data, and doing it fast enough for the data to be useful. In order to ask deep questions about their data, companies are increasingly looking for a single, unified view of their supply chain.

And yet, business data is often stored in different sources, systems, and formats, resulting in silos of information. These data silos take the form of enterprise resource planning systems, CSV files, spreadsheets, and relational databases. To pull together all of the data from these disparate sources, a business faces three interrelated challenges:

- Speed. Traditionally, businesses have attempted to catalog and organize supply chain data manually, profiling and integrating data themselves. That is slow, and it leads directly to the next challenge: cost.

- Cost. Manual work is expensive work. Usually more than one employee will need to work on the same data set in order to move quickly enough for the results to have any value for the business. Even with several employees working on the same data sets, this work will still not achieve what could be done on a machine scale.

- Efficiency. Relying completely on humans to organize and unify data is a situation ripe for error. Plus, there’s often no audit trail, and the work results in inherently incomplete views of information.

In a recent live demo by Dr. Clare Bernard, a field engineer at Tamr, I got a glimpse into how Tamr is using a combination of machine learning algorithms and input from subject matter experts to help businesses unify their data for analysis. A practice that uses short-term human intervention to actively improve machine models, human-in-the-loop machine learning is taking off across all types of industries, including fashion, automotive, and cloud services such as Google Maps. Read more…
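The human-in-the-loop idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of Tamr's actual method: the `similarity` scorer (built on the standard library's `difflib.SequenceMatcher` as a stand-in for a learned model), the `unify` function, the `low` and `high` thresholds, and the supplier names are all hypothetical. Pairs the scorer is confident about are decided automatically; only pairs in the uncertain band go to an expert, and each answer narrows that band so that later pairs need fewer questions.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """String similarity in [0, 1]; a stand-in for a learned matching model."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def unify(pairs, ask_expert, low=0.4, high=0.9):
    """Decide which record pairs refer to the same supplier.

    Scores at or above `high` are accepted, scores at or below `low` are
    rejected, and everything in between is sent to `ask_expert(a, b)`.
    Each expert answer moves one threshold (a deliberately crude form of
    feedback, assumed here for illustration).
    """
    decisions = {}
    questions = 0
    for a, b in pairs:
        score = similarity(a, b)
        if score >= high:
            decisions[(a, b)] = True
        elif score <= low:
            decisions[(a, b)] = False
        else:
            answer = ask_expert(a, b)
            questions += 1
            decisions[(a, b)] = answer
            # Narrow the uncertain band using the expert's answer.
            if answer:
                high = score
            else:
                low = score
    return decisions, questions


if __name__ == "__main__":
    # Hypothetical supplier names; the "expert" here always confirms a match.
    sample = [
        ("Acme Corp", "Acme Corp"),
        ("Acme Corp", "Zebra Ltd"),
        ("Acme Corp", "ACME Corporation"),
        ("Initech LLC", "Initech L.L.C."),
    ]
    results, asked = unify(sample, lambda a, b: True)
    for pair, is_match in results.items():
        print(pair, "match" if is_match else "no match")
    print("expert questions:", asked)
```

The design choice worth noting is that human effort is spent only where the machine is unsure, which is what lets a small number of expert answers influence a large number of automatic decisions.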

Consensual reality

The data model of augmented reality is likely to be a series of layers, some of which we consent to share with others.

A couple of days ago, I had a walking meeting with Frederic Guarino to discuss virtual and augmented reality, and how it might change the entertainment industry.

At one point, we started discussing interfaces — would people bring their own headsets to a public performance? Would retinal projection or heads-up displays win?

One of the things we discussed was projections and holograms. Lighting the physical world with projected content is the easiest way to create an interactive, augmented experience: there’s no gear to wear, for starters. But will it work?

This stuff has been on my mind a lot lately. I’m headed to Augmented World Expo this week, and had a chance to interview Ori Inbar, the founder of the event, in preparation.

Among other things, we discussed what Inbar calls his three rules for augmented reality design:

- The content you see has to emerge from the real world and relate to it.

- The content should not distract you from the real world; it must add to it.

- Don’t use it when you don’t need it. If a film is better on the TV, watch the TV.

To understand the potential of augmented reality more fully, we need to look at the notion of consensual realities. Read more…
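One way to picture the layered data model mentioned above is as a list of content layers, each carrying its own consent list. The sketch below is purely illustrative and not taken from any AR platform: the `Layer` class, its `consent` and `revoke` methods, the `visible_layers` function, and the example names are all assumptions made for the example. A viewer sees a layer only if they own it or its owner has consented to share it with them.

```python
from dataclasses import dataclass, field


@dataclass
class Layer:
    """One layer of augmented content (hypothetical model)."""
    name: str
    owner: str
    shared_with: set = field(default_factory=set)

    def consent(self, viewer):
        """The owner agrees to share this layer with `viewer`."""
        self.shared_with.add(viewer)

    def revoke(self, viewer):
        """The owner withdraws consent; a no-op if none was given."""
        self.shared_with.discard(viewer)


def visible_layers(viewer, layers):
    """Names of the layers `viewer` owns or has been granted access to."""
    return [
        layer.name
        for layer in layers
        if layer.owner == viewer or viewer in layer.shared_with
    ]


if __name__ == "__main__":
    signs = Layer("street signs", owner="city")
    notes = Layer("private notes", owner="alice")
    signs.consent("alice")
    print(visible_layers("alice", [signs, notes]))
    print(visible_layers("bob", [signs, notes]))
```

Keeping consent on the layer rather than on the viewer means sharing can be granted or withdrawn per layer, which matches the idea that only some layers are shared with others.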

Artificial intelligence?

AI scares us because it could be as inhuman as humans.

Elon Musk started a trend. Ever since he warned us about artificial intelligence, all sorts of people have been jumping on the bandwagon, including Stephen Hawking and Bill Gates.

Although I believe we’ve entered the age of postmodern computing, when we don’t trust our software, and write software that doesn’t trust us, I’m not particularly concerned about AI. AI will be built in an era of distrust, and that’s good. But there are some bigger issues here that have nothing to do with distrust.

What do we mean by “artificial intelligence”? We like to point to the Turing test; but the Turing test includes an all-important Easter Egg: when someone asks Turing’s hypothetical computer to do some arithmetic, the answer it returns is incorrect. An AI might be a cold calculating engine, but if it’s going to imitate human intelligence, it has to make mistakes. Not only can it make mistakes, it can (indeed, must) be deceptive, misleading, evasive, and arrogant if the situation calls for it.

That’s a problem in itself. Turing’s test doesn’t really get us anywhere. It holds up a mirror: if a machine looks like us (including mistakes and misdirections), we can call it artificially intelligent. That begs the question of what “intelligence” is. We still don’t really know. Is it the ability to perform well on Jeopardy? Is it the ability to win chess matches? These accomplishments help us to define what intelligence isn’t: it’s certainly not the ability to win at chess or Jeopardy, or even to recognize faces or make recommendations. But they don’t help us to determine what intelligence actually is. And if we don’t know what constitutes human intelligence, why are we even talking about artificial intelligence? Read more…

On the evolution of machine learning

From linear models to neural networks: an interview with Reza Zadeh.

Get notified when our free report, “Future of Machine Intelligence: Perspectives from Leading Practitioners,” is available for download. The following interview is one of many that will be included in the report.

As part of our ongoing series of interviews surveying the frontiers of machine intelligence, I recently interviewed Reza Zadeh. Reza is a Consulting Professor in the Institute for Computational and Mathematical Engineering at Stanford University and a Technical Advisor to Databricks. His work focuses on Machine Learning Theory and Applications, Distributed Computing, and Discrete Applied Mathematics.

Key Takeaways

- Neural networks have made a comeback and are playing a growing role in new approaches to machine learning.

- The greatest successes are being achieved via a supervised approach leveraging established algorithms.

- Spark is an especially well-suited environment for distributed machine learning.

David Beyer: Tell us a bit about your work at Stanford.

Reza Zadeh: At Stanford, I designed and teach distributed algorithms and optimization (CME 323) as well as a course called discrete mathematics and algorithms (CME 305). In the discrete mathematics course, I teach algorithms from a completely theoretical perspective, meaning that it is not tied to any programming language or framework, and we fill up whiteboards with many theorems and their proofs. Read more…

Our future sits at the intersection of artificial intelligence and blockchain

The O'Reilly Radar Podcast: Steve Omohundro on AI, cryptocurrencies, and ensuring a safe future for humanity.


I met up with Possibility Research president Steve Omohundro at our Bitcoin & the Blockchain Radar Summit to talk about an interesting intersection: artificial intelligence (AI) and blockchain/cryptocurrency technologies. This Radar Podcast episode features our discussion about the role cryptocurrency and blockchain technologies will play in the future of AI, Omohundro’s Self Aware Systems project that aims to ensure intelligent technologies are beneficial for humanity, and his work on the Pebble cryptocurrency.

Synthesizing AI and crypto-technologies

Bitcoin piqued Omohundro’s interest from the very start, but his excitement built as he started realizing the disruptive potential of the technology beyond currency — especially the potential for smart contracts. He began seeing ways the technology will intersect with artificial intelligence, the area of focus for much of his work:

I’m very excited about what’s happening with the cryptocurrencies, particularly Ethereum. I would say Ethereum is the most advanced of the smart contracting ideas, and there’s just a flurry of insights, and people are coming up every week with, ‘Oh we could use it to do this.’ We could have totally autonomous corporations running on the blockchain that copy what Uber does, but much more cheaply. It’s like, ‘Whoa what would that do?’

I think we’re in a period of exploration and excitement in that field, and it’s going to merge with the AI systems because programs running on the blockchain have to connect to the real world. You need to have sensors and actuators that are intelligent, have knowledge about the world, in order to integrate them with the smart contracts on the blockchain. I see a synthesis of AI and cryptocurrencies and crypto-technologies and smart contracts. I see them all coming together in the next couple of years.