- Maintain Separate Github Accounts — simple advice.

- Cooper-Hewitt Pen Data — anonymized data from the Cooper-Hewitt design museum’s fantastic pen.

- Zero Rating’s Problem — Wikipedia was zero-rated for Angola, so Angolans began swapping movies via Wikipedia. Zero rating (“no data charge for this service”) is an incentive to use the site, not necessarily for the purpose intended.

- Motion Design is the Future of UI — Motion tells stories. Everything in an app is a sequence, and motion is your guide. Someone caught the animations and transitions bug.

"software architecture" entries

Four short links: 24 March 2016

Work and Home Github, Museum Data, Bandwidth Incentives, and Motion Design

Contrasting architecture patterns with design patterns

How both kinds of patterns can add clarity and understanding to your project.

Developers are accustomed to design patterns, as popularized in the book Design Patterns by Gamma, et al. Each pattern describes a common problem posed in object-oriented software development along with a solution, visualized via class diagrams. In the Software Architecture Fundamentals workshop, Mark Richards & I discuss a variety of architecture patterns, such as Layered, Micro-Kernel, SOA, etc. However, architecture patterns differ from design patterns in several important ways.

Components rather than classes

Architectural elements tend towards collections of classes or modules, generally represented as boxes. Diagrams about architecture represent the loftiest level looking down, whereas class diagrams are at the most atomic level. The purpose of architecture patterns is to understand how the major parts of the system fit together, how messages and data flow through the system, and other structural concerns.

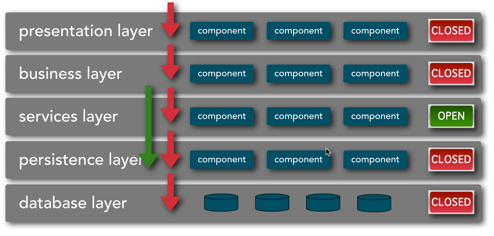

Architecture diagrams tend to be less rigidly defined than class diagrams. Often the purpose of a diagram is to show a single aspect of the system, and simple iconography works best. For example, one aspect of the Layered architecture pattern is whether the layers are closed (only accessible from the superior layer) or open (allowed to bypass the layer if no value added), as shown in Figure 1.

Figure 1: Layered architecture with mixed closed and open layers

This feature of the architecture isn’t the most important part, but is important to call out because it affects the efficacy of this pattern. For example, if developers violate this principle (e.g., performing queries from the presentation layer directly to the data layer), it compromises the separation of concerns and layer isolation that are the prime benefits of this pattern. Often an architectural pattern consists of several diagrams, each showing an important dimension.
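The closed/open rule described above can be sketched as a small check. This is a minimal illustration, not part of the pattern's definition: the layer names, their order, and which layer is marked open are all assumptions made for the example, as is the helper `may_call`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    closed: bool  # closed: requests may not skip over this layer


# Ordered top to bottom. Names and open/closed flags are hypothetical.
LAYERS = [
    Layer("presentation", closed=True),
    Layer("business", closed=True),
    Layer("services", closed=False),  # open: may be bypassed
    Layer("persistence", closed=True),
    Layer("database", closed=True),
]


def may_call(caller: str, callee: str, layers=LAYERS) -> bool:
    """Return True if `caller` may call down to `callee` directly."""
    names = [layer.name for layer in layers]
    src, dst = names.index(caller), names.index(callee)
    if dst <= src:
        return False  # only downward calls are modelled in this sketch
    # Every layer strictly between caller and callee must be open.
    return all(not layer.closed for layer in layers[src + 1:dst])
```

Under these assumptions, the business layer may skip the open services layer and reach persistence directly, while a query from presentation straight to the database is rejected because it would bypass closed layers.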

Building applications in Azure

Identifying the key requirements of a web application cloud architecture.

Download a free copy of “Azure for Developers,” an O’Reilly report by experienced .NET developer John Adams that breaks down Microsoft’s Azure platform in plain language, so that you can quickly get up to speed.

One of the most natural uses of the cloud is for web applications. You may already be using virtual machines on your own systems to make deploying your applications easier, either to new hardware or to additional servers. Microsoft Azure uses virtualization too, but it also brings useful benefits that virtualization cannot deliver alone. By hosting your application in the cloud, you can leverage automatic scaling, load balancing, system health monitoring, and logging. You also benefit from the fact that managed cloud platforms help narrow the attack surface of your system by automatically patching the operating system and runtimes and by keeping systems sandboxed. Let’s look at some examples of how to build some common web applications inside of Microsoft Azure.

Online store

Imagine that you work for a retailer who generates a significant amount of revenue through online sales. Imagine also that this retailer has been around for long enough that it already has an established web architecture that runs in a private data center. This retailer has decided that it wants to move to a hosted platform so that it no longer has any data center responsibilities and it can focus on its core business. How do you replatform this web application into Microsoft Azure? Let’s first identify some requirements for this system:

- It has high utilization and needs to serve a large number of concurrent users without timing out, even during peak hours such as Black Friday sales.

- It needs to accommodate a wide variety of products in its database that do not necessarily all follow the same schema.

- It needs a fast and intelligent search bar so that customers can find products easily.

- It needs to be able to recommend products to customers as they shop to help generate additional revenue.

Regardless of how these requirements are being met today in the private data center, I can suggest some guidelines for reproducing this system in Microsoft Azure so that you can boost performance rather than merely replicate it. I will take each requirement in order and explain how to leverage Azure components to meet it.

4 reasons why microservices resonate

Microservices optimize evolutionary change at a granular level.

We just finished the first O’Reilly Software Architecture Conference, and the overwhelmingly most popular topic was microservices. Why all the hype about an architectural style?

Microservices are the first post-DevOps revolution architecture.

The DevOps revolution highlighted how much inadvertent friction an outdated operations mindset can cause, starting the move towards automating away manual tasks. By automating chores like machine provisioning and deployments, it suddenly became cheap to make changes that used to be expensive. Some architects properly viewed this new capability as a super power, and built architectures that fully embraced the operational aspects of their design. The Microservice architectural style prioritizes operational concerns as one of the key aspects of the architecture.

Microservice architectures borrow a design aesthetic from Domain-Driven Design called the Bounded Context. A bounded context encapsulates all internal details of that domain and has explicit integration points with other bounded contexts. Microservice architectures reify the logical DDD bounded context into physical architecture. For example, it is common in microservice architectures for services that must persist data to own their database: members of the service team handle provisioning, backups, schema, migration, etc. In other words, in microservice architectures, the bounded context is also a physical context. That also means that this service implementation isn’t coupled to any other team’s implementation, clearing the path for independent evolution. I recently published some writing about the realization that architecture is abstract until operationalized. In other words, until you have deployed an architecture and upgraded parts of it, you don’t fully understand it.
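The idea of a service that owns its data and exposes only explicit integration points can be sketched in a few lines. This is a toy, in-process illustration under stated assumptions: `OrderService`, `BillingService`, and their methods are hypothetical names, and the private dictionaries merely stand in for each service's own database.

```python
class OrderService:
    """Owns its data store; nothing outside this class touches `_orders`."""

    def __init__(self) -> None:
        self._orders = {}  # stands in for the service's own database
        self._next_id = 1

    # Explicit integration points: the only way in or out of this context.
    def place_order(self, customer_id: int, total: float) -> int:
        order_id = self._next_id
        self._next_id += 1
        self._orders[order_id] = {"customer_id": customer_id, "total": total}
        return order_id

    def order_summary(self, order_id: int) -> dict:
        # Return a copy so callers cannot mutate internal state.
        return dict(self._orders[order_id])


class BillingService:
    """A separate context with its own store; it only calls the public API."""

    def __init__(self, orders: OrderService) -> None:
        self._orders = orders
        self._invoices = {}  # billing's own data, never shared

    def invoice(self, order_id: int) -> float:
        summary = self._orders.order_summary(order_id)
        self._invoices[order_id] = summary["total"]
        return self._invoices[order_id]
```

Because billing depends only on `order_summary`, the order team could change its internal schema without coordinating with billing, which is the independent evolution the paragraph describes.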

The shape of software architecture

Five things we learned from the O’Reilly Software Architecture Conference 2015.

Within this piece you’ll find my takeaways and lessons learned from the event. I expect these initial impressions to both shape our upcoming exploration of software architecture and be shaped by continued shifts within software architecture.

How the DevOps revolution informs software architecture

The O'Reilly Radar Podcast: Neal Ford on the changing role of software architects and the rise of microservices.

In this episode of the Radar Podcast, O’Reilly’s Mac Slocum sits down with Neal Ford, a software architect and meme wrangler at ThoughtWorks, to talk about the changing role of software architects. They met up at our recent Software Architecture Conference in Boston — if you missed the event, you can sign up to be notified when the Complete Video Compilation of all sessions and talks is available.

Slocum started the conversation with the basics: what, exactly, does a software architect do? Ford noted that there’s not a straightforward answer, but that the role really is a “pastiche” of development, soft skills and negotiation, and solving business domain problems. He acknowledged that the role historically has been negatively perceived as a non-coding, post-useful, ivory tower deep thinker, but noted that has been changing over the past five to 10 years as the role has evolved into real-world problem solving, as opposed to operating in abstractions:

“One of the problems in software, I think, is that you build everything on towers of abstractions, and so it’s very easy to get to the point where all you’re doing is playing with abstractions, and you don’t reify that back to the real world, and I think that’s the danger of this kind of ivory-tower architect. When you start looking at things like continuous delivery and continuous deployment, you have to take those operational concerns into account, and I think that is making the role of architect a lot more relevant now, because they are becoming much more involved in the entire software development ecosystem, not just the front edge of it.”

Signals from the O’Reilly Software Architecture Conference 2015

From careers to culture to code, here are key insights from the O'Reilly Software Architecture Conference 2015.

Experts from across the software architecture world came together in Boston for the O’Reilly Software Architecture Conference 2015. Below we’ve assembled notable keynotes, interviews, and insights from the event.

Software architects: post-“post-useful”

The old notion of a software architect being a non-coding, post-useful deep thinker is giving way to something far more interesting, says Neal Ford, software architect and meme wrangler at ThoughtWorks. “Architecture has become much more interesting now because it’s become more encompassing … it’s trying to solve real problems rather than play with abstractions.”

How CI removes the pain

The tradeoffs of (accidentally) discarding continuous integration.

I’ve given a continuous delivery workshop a few times with ThoughtWorks Chief Scientist Martin Fowler, who tells an interesting story about continuous integration, from the first software project he ever saw. When Martin was a teenager, his father had a friend who was running a software project, and he gave Martin the nickel (or five pence) tour — a bunch of men, predominately on mainframe terminals, working in an old warehouse. Martin remarked that the thing that struck him the most was when the guide told him that all the developers were “currently integrating all their code.” They had finished coding six months prior, yet despite that they weren’t sure when they were going to be done “integrating.”

That revelation surprised Martin: in his mind, software development was a discrete, scientific, deterministic process, not at all represented by the vague comments of these developers. But the practice of as-late-as-possible integration was common back in an earlier era of software development. If you look at software engineering texts of the ’60s and ’70s, every project included an integration phase. This isn’t how we think of integration today, which happens at the granularity of services and applications. Rather, a common practice was to have developers code in isolation for weeks and months at a time, then integrate all their code together into a cohesive whole. And that phase was, not surprisingly, a painful part of most projects. Yet, that type of ’60s and ’70s workflow is still codified in some version control tools today, even though we have now determined that late integration is the opposite of how we should approach this problem.

3 easy reasons why you’ll move your business to the cloud

Migrating to cloud-native application architectures leads to innovation.

Editor’s note: this is an advance excerpt from Chapter 1 of the forthcoming Migrating to Cloud-Native Application Architectures by Matt Stine. This report examines how the cloud enables innovation and the changes an enterprise must consider when adopting cloud-native application architectures.

Let’s examine the common motivations behind moving to cloud-native application architectures.

Speed

It’s become clear that speed wins in the marketplace. Businesses that are able to innovate, experiment, and deliver software-based solutions quickly are outcompeting those that follow more traditional delivery models.

In the enterprise, the time it takes to provision new application environments and deploy new versions of software is typically measured in days, weeks, or months. This lack of speed severely limits the risk that can be taken on by any one release, because the cost of making and fixing a mistake is also measured on that same timescale.

Internet companies are often cited for their practice of deploying hundreds of times per day. Why are frequent deployments important? If you can deploy hundreds of times per day, you can recover from mistakes almost instantly. If you can recover from mistakes almost instantly, you can take on more risk. If you can take on more risk, you can try wild experiments—the results might turn into your next competitive advantage.

The elasticity and self-service nature of cloud-based infrastructure naturally lend themselves to this way of working. Provisioning a new application environment by making a call to a cloud service API is faster than a form-based manual process by several orders of magnitude. Deploying code to that new environment via another API call adds more speed. Adding self-service and hooks to teams’ continuous integration/build server environments adds even more speed. Eventually we can measure the answer to Lean guru Mary Poppendieck’s question, “How long would it take your organization to deploy a change that involves just one single line of code?” in minutes or seconds.

Imagine what your team… what your business… could do if you were able to move that fast!

Variations in event-driven architecture

Find out if mediator or broker topology is right for you.

Editor’s note: this is an advance excerpt from Chapter 2 of the forthcoming Software Architecture Patterns by Mark Richards. This report looks at the patterns that define the basic characteristics and behavior of highly scalable and highly agile applications, and will be made available to download in advance of our Software Architecture Conference happening March 16-19 in Boston.

The event-driven architecture pattern is a popular distributed asynchronous architecture pattern used to produce highly scalable applications. It is also highly adaptable and can be used for both small and large, complex applications. The pattern is made up of highly decoupled, single-purpose event processing components that asynchronously receive and process events.

The event-driven architecture pattern consists of two main topologies, the mediator and the broker. The mediator topology is commonly used when you need to orchestrate multiple steps within an event through a central mediator, whereas the broker topology is used when you want to chain events together without the use of a central mediator. Because the architecture characteristics and implementation strategies differ between these two topologies, it is important to understand each one to know which is best suited for your particular situation.
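The difference between the two topologies can be sketched in a few lines. This is a minimal illustration, not the report's implementation: the class names, topic names, and the order-processing steps are assumptions, and everything runs synchronously in one process for brevity, whereas a real system would place queues or a message broker between components.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class Mediator:
    """Mediator topology: one central component runs the steps in order."""

    def __init__(self, steps: list[Handler]) -> None:
        self._steps = steps

    def handle(self, event: dict) -> None:
        for step in self._steps:
            step(event)


class Broker:
    """Broker topology: no orchestrator; processors chain events themselves."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in list(self._subscribers[topic]):
            handler(event)


def run_mediator() -> list[str]:
    log: list[str] = []
    mediator = Mediator([
        lambda e: log.append(f"validate {e['id']}"),
        lambda e: log.append(f"charge {e['id']}"),
        lambda e: log.append(f"ship {e['id']}"),
    ])
    mediator.handle({"id": 1})
    return log


def run_broker() -> list[str]:
    log: list[str] = []
    broker = Broker()

    def take_payment(event: dict) -> None:
        log.append(f"charge {event['id']}")
        broker.publish("payment_taken", event)  # chain the next event

    def ship(event: dict) -> None:
        log.append(f"ship {event['id']}")

    broker.subscribe("order_placed", take_payment)
    broker.subscribe("payment_taken", ship)
    broker.publish("order_placed", {"id": 1})
    return log
```

In `run_mediator` the sequence of steps lives in one place, so it is easy to see and change the orchestration. In `run_broker` the sequence emerges from which processor publishes which follow-up event, so no single component knows the whole flow.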