"BioCoder" entries

Open source lessons for synthetic biology

What bio can learn from the open source work of Tesla, Google, and Red Hat.

When building a biotech start-up, there is a certain inevitability to every conversation you will have. For investors, accelerators, academics, friends, baristas, the first two questions will be: “what do you want to do?” and “have you got a patent yet?”

Almost everything revolves around getting IP protection in place, and patent lawyer meetings are usually the first sign that your spin-off is on the way. But what if there were a way to avoid the patent dance, relying instead on implementation? It seems somewhat utopian, but there is a precedent in the technology world: open source.

What is open source? Essentially, it is any software whose source code (the underlying program) is available for anyone to modify and distribute. This means that, unlike typical proprietary development processes, it lends itself to collaborative development between larger groups, often spread out across large distances. From humble beginnings, the open source movement has developed to the point of providing operating systems (e.g. Linux), Internet browsers (Firefox), 3D modelling software (Blender), monetary alternatives (Bitcoin), and even integrated automation systems for your home (openHAB).

Money, money, money…

The obvious question is then, “OK, but how do they make money?” The answer lies not in attempting to profit from the software code itself, but rather from its implementation and from the applications built on top of it. For the implementation side, take Red Hat Inc., a multinational software company in the S&P 500 with a market cap of $14.2 billion, which produces the extremely popular Red Hat Enterprise Linux distribution. Although the distribution is open source and freely available, Red Hat makes its money by selling a thoroughly bug-tested operating system and then contracting to provide support for 10 years. Thus, businesses are not buying the code; they are buying a rapid response to any problems.

ResourceMiner: Toppling the Tower of Babel in the lab

An open source project aims to crowdsource a common language for experimental design.

Contributing author: Tim Gardner

Editor’s note: This post originally appeared on PLOS Tech; it is republished here with permission.

From Gutenberg’s invention of the printing press to the Internet of today, technology has enabled faster communication, and faster communication has accelerated technology development. Today, we can zip photos from a mountaintop in Switzerland back home to San Francisco with hardly a thought, but that wasn’t so trivial just a decade ago. It’s not just selfies that are being sent; it’s also product designs, manufacturing instructions, and research plans — all of it enabled by invisible technical standards (e.g., TCP/IP) and language standards (e.g., English) that allow machines and people to communicate.

But in the laboratory sciences (life, chemical, material, and other disciplines), communication remains inhibited by practices more akin to the oral traditions of a blacksmith shop than the modern Internet. In a typical academic lab, the reference description of an experiment is the long-form narrative in the “Materials and Methods” section of a paper or a book. Similarly, industry researchers depend on basic text documents in the form of Standard Operating Procedures. In both cases, essential details of the materials and protocol for an experiment are typically written somewhere in a long-forgotten, hard-to-interpret lab notebook (paper or electronic). More typically, details are simply left to the experimenter to remember and to the “lab culture” to retain.

At the dawn of science, when a handful of researchers were working on fundamental questions, this may have been good enough. But nowadays this archaic method of protocol record keeping and sharing is so lacking that half of all biomedical studies are estimated to be irreproducible, wasting $28 billion each year of U.S. government funding. With more than $400 billion invested each year in biological and chemical research globally, the full cost of irreproducible research to the public and private sector worldwide could be staggeringly large. Read more…

Imagining a future biology

We need to nurture our imaginations to fuel the biological revolution.

Download the new issue of BioCoder, our newsletter covering the biological revolution.

I recently took part in a panel at Engineering Biology for Science & Industry, where I talked about the path biology is taking as it becomes more and more of an engineering discipline, and how understanding the path electronics followed will help us build our own hopes and dreams for the biological revolution.

In 1901, Marconi started the age of radio, and really the age of electronics, with the “first” transatlantic radio transmission. It’s an interesting starting point, because important as this event was, there’s good reason to believe that it never really happened. We know a fair amount about the equipment and the frequencies Marconi was using: we know how much power he was transmitting (a lot), how sensitive his receiver was (awful), and how good his antenna was (not very).

We can compute the path loss for a signal transmitted from Cornwall to Newfoundland, subtract that from the strength of the signal he transmitted, and compare that to the sensitivity of the receiver. If you do that, you have to conclude that Marconi’s famous reception probably never happened. If he succeeded, it was by means of a fortunate accident: there are several possible explanations for how a radio signal could have made it to Marconi’s site in Newfoundland. All of these involve phenomena that Marconi couldn’t have known about. If he succeeded, his equipment and the radio waves themselves were behaving in ways that weren’t understood at the time, though whether he understood what was happening makes no difference. So, when he heard that Morse code S, did he really hear it? Did he imagine it? Did he lie? Or was he very lucky? Read more…
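The comparison described above is what radio engineers call a link budget. Here is a minimal sketch in Python, assuming the standard free-space path loss formula, which is only a lower bound on the real loss: an over-the-horizon path adds further losses, represented here by a hypothetical `extra_loss_db` input. The function names are illustrative, and every number in the usage example is a placeholder, not a historical measurement of Marconi's equipment.

```python
import math


def free_space_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Free-space path loss in dB (distance in km, frequency in MHz).

    Standard formula: 20*log10(d) + 20*log10(f) + 32.44.
    It ignores the curvature of the Earth and atmospheric absorption,
    so it underestimates the loss on an over-the-horizon path.
    """
    return 20 * math.log10(distance_km) + 20 * math.log10(frequency_mhz) + 32.44


def link_margin_db(
    tx_power_dbm: float,
    path_loss_db: float,
    rx_sensitivity_dbm: float,
    antenna_gains_db: float = 0.0,
    extra_loss_db: float = 0.0,
) -> float:
    """Received power minus receiver sensitivity, in dB.

    A negative margin means the signal arrives below what the
    receiver can detect.
    """
    received_dbm = tx_power_dbm + antenna_gains_db - path_loss_db - extra_loss_db
    return received_dbm - rx_sensitivity_dbm


if __name__ == "__main__":
    # All values below are placeholders chosen for illustration only.
    loss = free_space_path_loss_db(distance_km=3400.0, frequency_mhz=0.85)
    margin = link_margin_db(
        tx_power_dbm=70.0,         # assumed transmitter power
        path_loss_db=loss,
        rx_sensitivity_dbm=-20.0,  # assumed (poor) receiver sensitivity
        extra_loss_db=90.0,        # assumed beyond-the-horizon losses
    )
    print(f"free-space loss: {loss:.1f} dB, link margin: {margin:.1f} dB")
```

The free-space term alone is rarely the deciding factor at these frequencies; the conclusion depends almost entirely on what is assumed for the additional losses and for the receiver's sensitivity, which is why those are left as explicit inputs.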

Smart collaborations beget smart solutions

Software developers and hardware engineers team up with biologists to address laboratory inefficiencies.

Download a free copy of the new edition of BioCoder, our newsletter covering the biological revolution.

Following the Synthetic Biology Leadership Excellence Accelerator Program (LEAP) showcase, I met with fellows Mackenzie Cowell, co-founder of DIYbio.org, and Edward Perello, co-founder of Desktop Genetics. Cowell and Perello both wanted to know what processes in laboratory research are inefficient and how we can eliminate or optimize them.

One solution we’re finding promising is pairing software developers and hardware engineers with biologists in academic labs or biotech companies to engineer small fixes, which could result in monumental increases in research productivity.

An example of an inefficient lab process that has yet to be automated is fruit fly — Drosophila — manipulation. Drosophila handling and maintenance is laborious, and Dave Zucker and Matt Zucker from Flysorter are developing a technology using computer vision and machine learning software to automate these manual tasks; the team is currently engineering prototypes. This is a perfect example of engineers developing a technology to automate a completely manual and extremely tedious laboratory task. Check them out, and stay tuned for an article from them in the October issue of BioCoder. Read more…

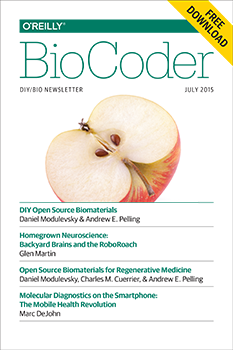

Announcing BioCoder issue 8

BioCoder 8: neuroscience, robotics, gene editing, microbiome sequence analysis, and more.

Download a free copy of the new edition of BioCoder, our newsletter covering the biological revolution.

We are thrilled to announce the eighth issue of BioCoder. This marks two years of diverse, educational, and cutting-edge content, and this issue is no exception. Highlighted in this issue are technologies and tools that span neuroscience, diagnostics, robotics, gene editing, microbiome sequence analysis, and more.

Glen Martin interviewed Tim Marzullo, co-founder of Backyard Brains, to learn more about how their easy-to-use kits, like the RoboRoach, demonstrate how nervous systems work.

Marc DeJohn from Biomeme discusses their smartphone diagnostics technology for on-site gene detection of disease, biothreat targets, and much more.

Daniel Modulevsky, Charles Cuerrier, and Andrew Pelling from Pelling Lab at the University of Ottawa discuss different types of open source biomaterials for regenerative medicine and their use of de-cellularized apple tissue to generate 3D scaffolds for cells. If you follow their tutorial, you can do it, too!

aBioBot, highlighted by co-founder Raghu Machiraju, is a device that uses visual sensing and feedback to perform encodable laboratory tasks. Machiraju argues that “progress in biotechnology will come from the use of open user interfaces and open-specification middleware to drive and operate flexible robotic platforms.” Read more…

BioBuilder: Rethinking the biological sciences as engineering disciplines

Moving biology out of the lab will enable new startups, new business models, and entirely new economies.

Buy “BioBuilder: Synthetic Biology in the Lab,” by Natalie Kuldell, PhD, Rachel Bernstein, Karen Ingram, and Kathryn M. Hart.

What needs to happen for the revolution in biology and the life sciences to succeed? What are the preconditions?

I’ve compared the biorevolution to the computing revolution several times. One of the most important changes was that computers moved out of the lab, out of the machine room, out of that sacred space with raised floors, special air conditioning, and exotic fire extinguishers, into the home. Computers stopped being things that were cared for by an army of priests in white lab coats (and that broke several times a day), and started being things that people used. Somewhere along the line, software developers stopped being people with special training and advanced degrees; children, students, non-professionals — all sorts of people — started writing code. And enjoying it.

Biology is now in a similar place. But to take the next step, we have to look more carefully at what’s needed for biology to come out of the lab. Read more…

Democratizing biotech research

The O'Reilly Radar Podcast: DJ Kleinbaum on lab automation, virtual lab services, and tackling the challenges of reproducibility.

The convergence of software and hardware and the growing ubiquity of the Internet of Things are affecting industries across the board, and biotech labs are no exception. For this Radar Podcast episode, I chatted with DJ Kleinbaum, co-founder of Emerald Therapeutics, about lab automation, the launch of Emerald Cloud Laboratory, and the problem of reproducibility.

Kleinbaum and his co-founder Brian Frezza started Emerald Therapeutics to research cures for persistent viral infections. They didn’t set out to spin up a second company, but their efforts to automate their own lab processes proved so fruitful, they decided to launch a virtual lab-as-a-service business, Emerald Cloud Laboratory. Kleinbaum explained:

“When Brian and I started the company right out of graduate school, we had this platform anti-viral technology, which the company is still working on, but because we were two freshly minted nobody Ph.D.s, we were not going to be able to raise the traditional $20 or $30 million that platform plays raise in the biotech space.

“We knew that we had to be much more efficient with the money we were able to raise. Brian and I both have backgrounds in computer science. So, from the beginning, we were trying to automate every experiment that our scientists ran, such that every experiment was just push a button, walk away. It was all done with process automation and robotics. That way, our scientists would be able to be much more efficient than your average bench chemist or biologist at a biotech company.

“After building that system internally for three years, we looked at it and realized that every aspect of a life sciences laboratory had been encapsulated in both hardware and software, and that that was too valuable a tool to just keep internally at Emerald for our own research efforts. Around this time last year, we decided that we wanted to offer that as a service, that other scientists, companies, and researchers could use to run their experiments as well.” Read more…

Bringing an end to synthetic biology’s semantic debate

The O'Reilly Radar Podcast: Tim Gardner on the synthetic biology landscape, lab automation, and the problem of reproducibility.

Editor’s note: this podcast is part of our investigation into synthetic biology and bioengineering. For more on these topics, download a free copy of the new edition of BioCoder, our quarterly publication covering the biological revolution. Free downloads for all past editions are also available.

Tim Gardner, founder of Riffyn, has recently been working with the Synthetic Biology Working Group of the European Commission Scientific Committees to define synthetic biology, evaluate risk assessment methodologies, and then describe research areas. I caught up with Gardner for this Radar Podcast episode to talk about the synthetic biology landscape and issues in research and experimentation that he’s addressing at Riffyn.

Defining synthetic biology

Among the areas of investigation discussed at the EU’s Synthetic Biology Working Group was defining synthetic biology. The official definition reads: “SynBio is the application of science, technology and engineering to facilitate and accelerate the design, manufacture and/or modification of genetic materials in living organisms.” Gardner talked about the significance of the definition:

“The operative part there is the ‘design, manufacture, modification of genetic materials in living organisms.’ Biotechnologies that don’t involve genetic manipulation would not be considered synthetic biology, and more or less anything else that is manipulating genetic materials in living organisms is included. That’s important because it gets rid of this semantic debate of, ‘this is synthetic biology, that’s synthetic biology, this isn’t, that’s not,’ that often crops up when you have, say, a protein engineer talking to someone else who is working on gene circuits, and someone will claim the protein engineer is not a synthetic biologist because they’re not working with parts libraries or modularity or whatnot, and the boundaries between the two are almost indistinguishable from a practical standpoint. We’ve wrapped it all together and said, ‘It basically advances in the capabilities of genetic engineering. That’s what synthetic biology is.'”

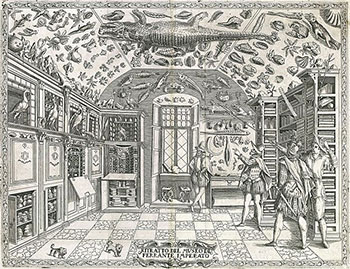

Beyond lab folklore and mythology

What the future of science will look like if we’re bold enough to look beyond centuries-old models.

Editor’s note: this post is part of our ongoing investigation into synthetic biology and bioengineering. For more on these areas, download the latest free edition of BioCoder.

Over the last six months, I’ve had a number of conversations about lab practice. In one, Tim Gardner of Riffyn told me about a gene transformation experiment he did in grad school. As he was new to the lab, he asked two more experienced scientists for their protocol: one said it must be done exactly at 42 C for 45 seconds, the other said exactly 37 C for 90 seconds. When he ran the experiment, Tim discovered that the temperature actually didn’t matter much. A broad range of temperatures and times would work.

In an unrelated conversation, DJ Kleinbaum of Emerald Cloud Lab told me about students who would only use their “lucky machine” in their work. Why, given a choice of lab equipment, did one of two apparently identical machines give “good” results for some experiment, while the other one didn’t? Nobody knew. Perhaps it is the tubing that connects the machine to the rest of the experiment; perhaps it is some valve somewhere; perhaps it is some quirk of the machine’s calibration.

The more people I talked to, the more stories I heard: labs where the experimental protocols weren’t written down, but were handed down from mentor to student. Labs where there was a shared common knowledge of how to do things, but where that shared culture never made it outside, not even to the lab down the hall. There’s no need to write it down or publish stuff that’s “obvious” or that “everyone knows.” As someone who is more familiar with literature than with biology labs, this behavior was immediately recognizable: we’re in the land of mythology, not science. Each lab has its own ritualized behavior that “works.” Whether it’s protocols, lucky machines, or common knowledge that’s picked up by every student in the lab (but which might not be the same from lab to lab), the process of doing science is an odd mixture of rigor and folklore. Everybody knows that you use 42 C for 45 seconds, but nobody really knows why. It’s just what you do.

Despite all of this, we’ve gotten fairly good at doing science. But to get even better, we have to go beyond mythology and folklore. And getting beyond folklore requires change: changes in how we record data, changes in how we describe experiments, and perhaps most importantly, changes in how we publish results. Read more…

Announcing BioCoder issue 6

BioCoder 6: iGEM's first Giant Jamboree, an update from the #ScienceHack Hack-a-thon, the Open qPCR project, and more.

Once again, we’re interested in your ideas and in new content, so if you have an article or a proposal for an article, send it in to BioCoder@oreilly.com. We’re very interested in what you’re doing. There are many, many fascinating projects that aren’t getting media attention. We’d like to shine some light on those. If you’re running one of them — or if you know of one, and would like to hear more about it — let us know. We’d also like to hear more about exciting start-ups. Who do you know that’s doing something amazing? And if it’s you, don’t be shy: tell us.

Above all, don’t hesitate to spread the word. BioCoder was meant to be shared. Our goal with BioCoder is to be the nervous system for a large and diverse but poorly connected community. We’re making progress, but we need you to help make the connections.